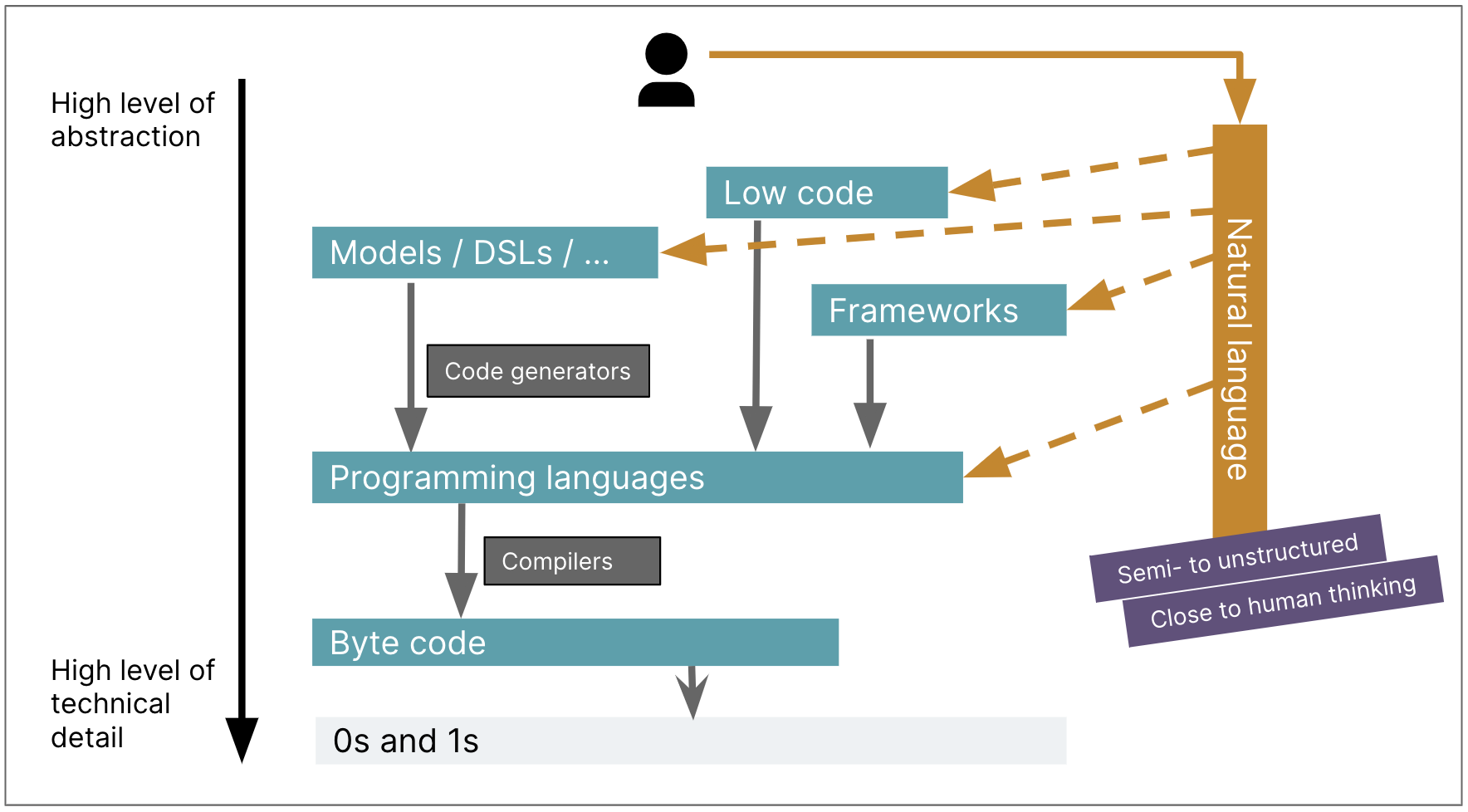

Like most loudmouths in this field, I’ve been paying a lot of attention
to the role that generative AI systems may play in software development. I
think the appearance of LLMs will change software development to a similar
degree as the change from assembler to the first high-level programming
languages. The further development of languages and frameworks increased our
abstraction level and productivity, but didn’t have that kind of impact on
the nature of programming. LLMs are making that degree of impact, but with
the distinction that they are not just raising the level of abstraction, but
also forcing us to consider what it means to program with non-deterministic
tools.

High-Level Languages (HLLs) introduced a radically new level of abstraction. With assembler I’m
thinking about the instruction set of a particular machine. I have to figure
out how to do even simple actions by moving data into the right registers to
invoke those specific actions. HLLs meant I could now think in terms of
sequences of statements, conditionals to choose between alternatives, and
iteration to repeatedly apply statements to collections of data values. I
can introduce names into many aspects of my code, making it clear what
values are supposed to represent. Early languages certainly had their
limitations. My first professional programming was in Fortran IV, where “IF”
statements didn’t have an “ELSE” clause, and I had to remember to name my
integer variables so they started with the letters “I” through “N”.

Relaxing such restrictions and gaining block structure (“I can have more
than one statement after my IF”) made my programming easier (and more fun)
but are the same kind of thing. Now I hardly ever write loops, and I
instinctively pass functions as data, but I’m still talking to the machine
in a similar way to how I did all those days ago on the Dorset moors with
Fortran. Ruby is a far more sophisticated language than Fortran, but it has
the same ambiance, in a way that Fortran and PDP-11 machine instructions do
not.
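To make the point about loops and functions-as-data concrete, here is a minimal sketch. It is written in Python purely for illustration (the essay itself names Ruby), and the function names are hypothetical. Both versions compute the same thing; the second passes functions as data instead of spelling out the loop, yet both remain precise instructions to the machine.

```python
def sum_even_squares_loop(values):
    """Explicit iteration: a loop, a conditional, and an accumulator."""
    total = 0
    for v in values:
        if v % 2 == 0:
            total += v * v
    return total


def sum_even_squares_functional(values):
    """Same computation, expressed by passing functions as data."""
    is_even = lambda v: v % 2 == 0
    square = lambda v: v * v
    return sum(map(square, filter(is_even, values)))


if __name__ == "__main__":
    data = [1, 2, 3, 4]
    print(sum_even_squares_loop(data))
    print(sum_even_squares_functional(data))
```

The style differs, but the abstraction is of the same kind: in both cases the program states exactly what to compute.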

Thus far I’ve not had the opportunity to do more than dabble with the
best Gen-AI tools, but I’m fascinated as I listen to friends and
colleagues share their experiences. I’m convinced that this is another
fundamental change: talking to the machine in prompts is as different to
Ruby as Fortran is to assembler. But this is more than a huge jump in
abstraction. When I wrote a Fortran function, I could compile it a hundred
times, and the result still manifested the exact same bugs. Large Language Models introduce a
non-deterministic abstraction, so I can’t just store my prompts in git and
know that I’ll get the same behavior each time. As my colleague
Birgitta put it, we’re not just moving up the abstraction levels,
we’re moving sideways into non-determinism at the same time.

illustration: Birgitta Böckeler
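The contrast between a compiled function and a prompt can be sketched with a toy example. This is only an illustration under loud assumptions: `toy_model` is a hypothetical stand-in that picks at random from a fixed list of completions, not a real LLM or any real API, and `CANDIDATES` is invented for the sketch.

```python
import random

# Hypothetical completions a model might produce for the same prompt.
CANDIDATES = ["return x * 2", "return x + x", "return 2 * x + 1"]


def compiled_function(x):
    """Deterministic: the same input always gives the same output,
    including the same bug (pretend the '+ 1' is a mistake)."""
    return x * 2 + 1


def toy_model(prompt, rng):
    """Hypothetical stand-in for an LLM: samples one plausible completion.
    The prompt is ignored here; a real model would condition on it."""
    return rng.choice(CANDIDATES)


# Running the deterministic function 100 times yields exactly one distinct result.
deterministic_runs = {compiled_function(21) for _ in range(100)}

# Sending the same "prompt" 100 times will usually yield several distinct results.
rng = random.Random()  # deliberately unseeded
sampled_runs = {toy_model("double x", rng) for _ in range(100)}

if __name__ == "__main__":
    print(deterministic_runs)
    print(sampled_runs)
```

Storing `compiled_function` in git pins down its behavior, bugs included. Storing the prompt string pins down only the input to the sampling step.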

As we learn to use LLMs in our work, we have to figure out how to
live with this non-determinism. This change is dramatic, and rather excites
me. I’m sure I’ll be sad at some things we’ll lose, but there will also be
things we’ll gain that few of us understand yet. This evolution in
non-determinism is unprecedented in the history of our profession.