Image by Author

ComfyUI has changed how creators and developers approach AI-powered image generation. Unlike traditional interfaces, ComfyUI's node-based architecture gives you unprecedented control over your creative workflows. This crash course will take you from complete beginner to confident user, walking you through every essential concept, feature, and practical example you need to master this powerful tool.

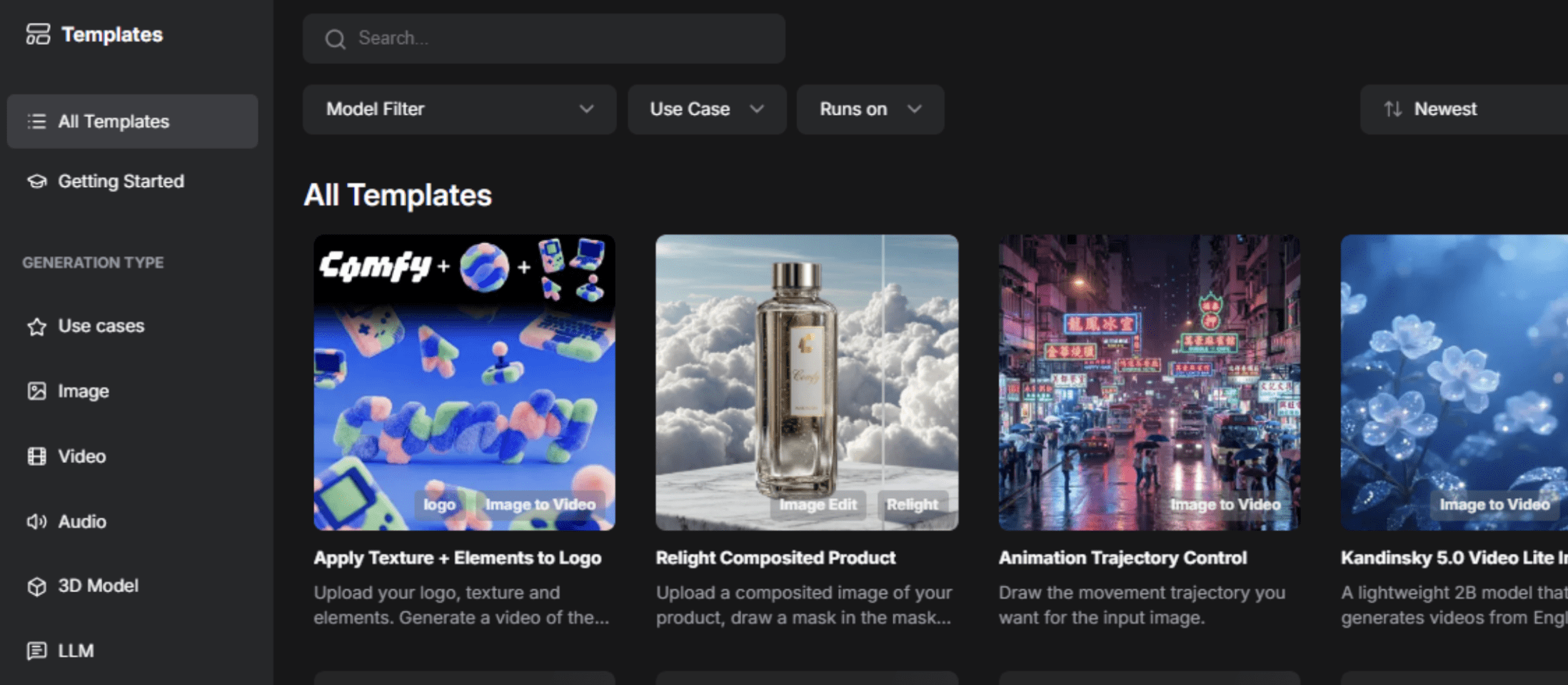

Image by Author

ComfyUI is a free, open-source, node-based interface and backend for Stable Diffusion and other generative models. Think of it as a visual programming environment where you connect building blocks (called "nodes") to create complex workflows for generating images, videos, 3D models, and audio.

Key advantages over traditional interfaces:

- You can build workflows visually without writing code while keeping full control over every parameter.

- You can save, share, and reuse entire workflows, with the workflow metadata embedded in the generated files.

- There are no hidden costs or subscriptions; it is free, open source, and fully customizable with custom nodes.

- It runs locally on your machine for faster iteration and lower operating costs.

- Its functionality is almost infinitely extensible through custom nodes that can meet your specific needs.

# Choosing Between Local and Cloud-Based Installation

Before exploring ComfyUI in more detail, you need to decide whether to run it locally or use a cloud-based version.

| Local Installation | Cloud-Based Installation |
|---|---|
| Works offline once installed | Requires a constant internet connection |
| No subscription fees | May involve subscription costs |
| Full data privacy and control | Less control over your data |
| Requires powerful hardware (especially an NVIDIA GPU) | No powerful hardware required |
| Manual installation and updates required | Automatic updates |
| Limited by your computer's processing power | Potential speed limitations during peak usage |

If you are just starting out, we recommend beginning with a cloud-based solution to learn the interface and concepts. As your skills develop, consider moving to a local installation for greater control and lower long-term costs.

# Understanding the Core Architecture

Before working with nodes, it is essential to understand the theoretical foundation of how ComfyUI operates. Think of it as a multiverse made of two universes: the red, green, blue (RGB) universe (what we see) and the latent space universe (where computation happens).

// The Two Universes

The RGB universe is our observable world. It contains regular images and data that we can see and understand with our own eyes. The latent space (the AI universe) is where the "magic" happens. It is a mathematical representation that models can understand and manipulate. It is chaotic, filled with noise, and contains the abstract mathematical structure that drives image generation.

// Using the Variational Autoencoder

The variational autoencoder (VAE) acts as a portal between these universes.

- Encoding (RGB to latent) takes a visible image and converts it into the abstract latent representation.

- Decoding (latent to RGB) takes the abstract latent representation and converts it back into an image we can see.

This concept is crucial because many nodes operate within a single universe, and understanding it will help you connect the right nodes together.
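To make the two universes concrete, here is a small sketch that computes the size of the latent tensor a VAE produces for a given image. It assumes the 8× spatial downscaling and 4 latent channels used by the Stable Diffusion 1.5 and SDXL VAEs. Newer models such as SD3 and Flux use 16 channels. The function names are illustrative and are not part of ComfyUI.

```python
def latent_shape(width, height, channels=4, factor=8, batch=1):
    """Return the (batch, channels, height, width) shape of a latent image.

    Assumes the VAE downsamples each spatial dimension by `factor`
    (8 for Stable Diffusion-style VAEs) and outputs `channels` latent channels.
    """
    if width % factor or height % factor:
        raise ValueError(f"width and height must be multiples of {factor}")
    return (batch, channels, height // factor, width // factor)


def compression_ratio(width, height, channels=4, factor=8):
    """How many RGB values map onto each latent value."""
    rgb_values = width * height * 3  # three color channels per pixel
    _, c, h, w = latent_shape(width, height, channels, factor)
    return rgb_values / (c * h * w)


print(latent_shape(1024, 1024))               # (1, 4, 128, 128)
print(latent_shape(1024, 1024, channels=16))  # (1, 16, 128, 128)
print(compression_ratio(1024, 1024))          # 48.0
```

Under the 4-channel assumption, a 1024×1024 image becomes a latent with 48 times fewer values. That compression is a large part of why running diffusion in latent space, and decoding only at the end, is practical.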

// Defining Nodes

Nodes are the fundamental building blocks of ComfyUI. Each node is a self-contained function that performs a specific task. Nodes have:

- Inputs (left side): Where data flows in

- Outputs (right side): Where processed data flows out

- Parameters: Settings you adjust to control the node's behavior
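Custom nodes are written in Python, and their structure mirrors the inputs, outputs, and parameters described above. Below is a minimal sketch of a hypothetical text node. It uses the class attributes that ComfyUI's custom-node convention expects: `INPUT_TYPES`, `RETURN_TYPES`, `FUNCTION`, and a module-level `NODE_CLASS_MAPPINGS` dictionary. The node itself, `JoinPrompts`, is invented for illustration.

```python
class JoinPrompts:
    """Hypothetical example node: joins two prompt fragments into one string."""

    @classmethod
    def INPUT_TYPES(cls):
        # Inputs (left side of the node) and their parameter widgets
        return {
            "required": {
                "first": ("STRING", {"multiline": True, "default": ""}),
                "second": ("STRING", {"multiline": True, "default": ""}),
                "separator": ("STRING", {"default": ", "}),
            }
        }

    # Outputs (right side of the node)
    RETURN_TYPES = ("STRING",)
    RETURN_NAMES = ("prompt",)
    # Name of the method ComfyUI calls when the node executes
    FUNCTION = "join"
    CATEGORY = "examples/text"

    def join(self, first, second, separator):
        # Drop empty fragments so we never produce a dangling separator
        parts = [p.strip() for p in (first, second) if p.strip()]
        # Return a tuple with one entry per output socket
        return (separator.join(parts),)


# ComfyUI discovers custom nodes through these mappings
NODE_CLASS_MAPPINGS = {"JoinPrompts": JoinPrompts}
NODE_DISPLAY_NAME_MAPPINGS = {"JoinPrompts": "Join Prompts (example)"}
```

Saved as the `__init__.py` of a folder inside `ComfyUI/custom_nodes/`, a file like this would typically make the node appear in the node menu after a restart.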

// Identifying Color-Coded Data Types

ComfyUI uses a color system to indicate what type of data flows between nodes:

| Color | Data Type | Example |
|---|---|---|
| Blue | RGB Images | Regular visible images |
| Pink | Latent Images | Images in latent representation |
| Yellow | CLIP | Text encoder that converts prompts into machine-readable form |
| Red | VAE | Model that converts between the two universes |
| Orange | Conditioning | Prompts and control instructions |
| Green | Text | Simple text strings (prompts, file paths) |
| Purple | Models | Checkpoints and model weights |
| Teal/Turquoise | ControlNets | Control data for guiding generation |

Understanding these colors is crucial because they tell you instantly whether two nodes can connect to each other.
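The rule behind the colors is simple: an output can only feed an input of the same data type. The sketch below models that rule in Python. The type names follow ComfyUI's internal identifiers as we understand them (`IMAGE`, `LATENT`, `CLIP`, `VAE`, `CONDITIONING`, `STRING`, `MODEL`, `CONTROL_NET`). The colors come from the table above. Treat it as a mental model rather than ComfyUI's actual validation code.

```python
# Data types and their socket colors, as listed in the table above
SOCKET_COLORS = {
    "IMAGE": "blue",
    "LATENT": "pink",
    "CLIP": "yellow",
    "VAE": "red",
    "CONDITIONING": "orange",
    "STRING": "green",
    "MODEL": "purple",
    "CONTROL_NET": "teal",
}


def can_connect(output_type: str, input_type: str) -> bool:
    """An output socket may only feed an input socket of the same type."""
    for t in (output_type, input_type):
        if t not in SOCKET_COLORS:
            raise KeyError(f"unknown data type: {t!r}")
    return output_type == input_type


# VAE Decode produces an IMAGE, so it can feed Save Image...
print(can_connect("IMAGE", "IMAGE"))   # True
# ...but a LATENT cannot go straight into Save Image without decoding first
print(can_connect("LATENT", "IMAGE"))  # False
```

ComfyUI's real behavior is more permissive in places; some custom nodes, for example, accept inputs of any type. The matching-color rule still holds for the core nodes covered here.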

// Exploring Important Node Types

Loader nodes import models and data into your workflow:

- Checkpoint Loader: Loads a model checkpoint, which usually bundles the model weights, the Contrastive Language-Image Pre-training (CLIP) text encoder, and the VAE in a single file.

- Load Diffusion Model: Loads model components separately (for newer models like Flux that do not bundle their components).

- VAE Loader: Loads the VAE separately.

- CLIP Loader: Loads the text encoder separately.

Processing nodes transform data:

- CLIP Text Encode: Converts text prompts into machine language (conditioning).

- KSampler: The core image generation engine.

- VAE Decode: Converts latent images back to RGB.

Utility nodes help you manage workflows:

- Primitive Node: Lets you enter values manually.

- Reroute Node: Tidies up the workflow layout by redirecting connections.

- Load Image: Imports images into your workflow.

- Save Image: Exports generated images.
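The loader, processing, and utility nodes above chain into the classic text-to-image graph. ComfyUI can export workflows in an "API format": a JSON object that maps node IDs to a `class_type` and its `inputs`, with links written as `[source_node_id, output_index]`. It accepts these graphs on its local `/prompt` HTTP endpoint. The sketch below builds such a graph in Python. The node class names are the built-in ones as we understand them, and the checkpoint filename is a hypothetical placeholder for a file in your `models/checkpoints` folder.

```python
import json
import urllib.request


def build_txt2img(ckpt_name, positive, negative, seed=0, steps=20, cfg=8.0,
                  width=1024, height=1024):
    """Return a text-to-image workflow in ComfyUI's API (JSON) format."""
    return {
        # Loader: outputs MODEL (0), CLIP (1), VAE (2)
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": ckpt_name}},
        # Processing: text -> conditioning, using the CLIP output of node 1
        "2": {"class_type": "CLIPTextEncode",
              "inputs": {"text": positive, "clip": ["1", 1]}},
        "3": {"class_type": "CLIPTextEncode",
              "inputs": {"text": negative, "clip": ["1", 1]}},
        # Empty latent canvas that KSampler fills with noise and denoises
        "4": {"class_type": "EmptyLatentImage",
              "inputs": {"width": width, "height": height, "batch_size": 1}},
        "5": {"class_type": "KSampler",
              "inputs": {"model": ["1", 0], "positive": ["2", 0],
                         "negative": ["3", 0], "latent_image": ["4", 0],
                         "seed": seed, "steps": steps, "cfg": cfg,
                         "sampler_name": "euler", "scheduler": "normal",
                         "denoise": 1.0}},
        # Back to the RGB universe, then save to ComfyUI's output folder
        "6": {"class_type": "VAEDecode",
              "inputs": {"samples": ["5", 0], "vae": ["1", 2]}},
        "7": {"class_type": "SaveImage",
              "inputs": {"images": ["6", 0], "filename_prefix": "ComfyUI"}},
    }


def queue_prompt(workflow, server="http://localhost:8188"):
    """POST the workflow to a running ComfyUI server and return its JSON reply."""
    data = json.dumps({"prompt": workflow}).encode("utf-8")
    req = urllib.request.Request(f"{server}/prompt", data=data,
                                 headers={"Content-Type": "application/json"})
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read())
```

With ComfyUI running locally, `queue_prompt(build_txt2img("model.safetensors", "a lighthouse at dusk", "blurry"))` would queue one generation. The reply should include an ID you can use to track the job.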

# Understanding the KSampler Node

The KSampler is arguably the most important node in ComfyUI. It is the "robot builder" that actually generates your images, and understanding its parameters is key to creating quality images.

// Reviewing KSampler Parameters

Seed (Default: 0)

The seed is the initial random state that determines the random noise placed at the start of generation. Think of it as your starting point for randomization.

- Fixed Seed: Using the same seed with the same settings will always produce the same image.

- Randomized Seed: Each generation gets a new random seed, producing different images.

- Value Range: 0 to 18,446,744,073,709,551,615.
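The seed works because pseudo-random number generators are deterministic: the same seed always yields the same sequence of "random" numbers, and therefore the same starting noise. ComfyUI generates its initial noise with PyTorch. The sketch below uses Python's standard `random` module as a stand-in to show the principle, and `initial_noise` is a hypothetical helper.

```python
import random

# 18,446,744,073,709,551,615: the top of KSampler's seed range (2^64 - 1)
MAX_SEED = 2**64 - 1


def initial_noise(seed, n=8):
    """Return n Gaussian noise values determined entirely by the seed."""
    if not 0 <= seed <= MAX_SEED:
        raise ValueError("seed out of range")
    rng = random.Random(seed)  # independent generator, seeded deterministically
    return [rng.gauss(0.0, 1.0) for _ in range(n)]


fixed = initial_noise(42)
print(fixed == initial_noise(42))        # True: fixed seed, identical noise
randomized = random.randint(0, MAX_SEED)  # "randomize": a fresh seed each run
print(randomized, initial_noise(randomized)[:3])
```

In practice, reproducing an image exactly also requires the same model and settings. Sometimes it also requires the same hardware and software versions.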

Steps (Default: 20)

Steps define the number of denoising iterations performed. Each step progressively refines the image from pure noise toward your desired output.

- Low Steps (10-15): Faster generation, less refined results.

- Medium Steps (20-30): A good balance between quality and speed.

- High Steps (50+): Higher quality but significantly slower.

CFG Scale (Default: 8.0, Range: 0.0-100.0)

The classifier-free guidance (CFG) scale controls how strictly the AI follows your prompt.

Analogy: Imagine giving a builder a blueprint.

- Low CFG (3-5): The builder glances at the blueprint, then does their own thing; creative, but may ignore instructions.

- High CFG (12+): The builder obsessively follows every detail of the blueprint; accurate, but the result may look stiff or over-processed.

- Balanced CFG (7-8 for Stable Diffusion, 1-2 for Flux): The builder mostly follows the blueprint while adding natural variation.
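Under the hood, classifier-free guidance combines two predictions at every step. One is conditioned on your prompt, and the other is unconditioned; in ComfyUI, the negative prompt plays the unconditioned role. The standard formula pushes the result away from the unconditioned prediction by the CFG scale: `uncond + cfg * (cond - uncond)`. The sketch below applies it element-wise to plain Python lists that stand in for model outputs. `apply_cfg` is an illustrative name.

```python
def apply_cfg(cond, uncond, cfg):
    """Classifier-free guidance: uncond + cfg * (cond - uncond), element-wise."""
    return [u + cfg * (c - u) for c, u in zip(cond, uncond)]


cond = [0.6, -0.2]   # hypothetical prediction with the positive prompt
uncond = [0.5, 0.0]  # hypothetical prediction with the negative prompt

print(apply_cfg(cond, uncond, 1.0))  # cfg=1: just the conditioned prediction
print(apply_cfg(cond, uncond, 7.0))  # cfg=7: the prompt's "direction" scaled 7x
```

The formula also explains two practical observations. At a scale of exactly 1.0 the negative prompt cancels out and has no effect, which is worth remembering at Flux's low CFG values. Large scales exaggerate the difference between the two predictions, which is one common explanation for the stiff, over-processed look.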

Sampler Name

The sampler is the algorithm used for the denoising process. Common samplers include Euler, DPM++ 2M, and UniPC.
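To make "sampler" concrete, here is a minimal sketch of the Euler method as used in k-diffusion-style samplers. At each noise level (sigma), the model's estimate of the clean image gives a direction, and the sampler takes one step along it to the next, smaller sigma. The `perfect_denoiser` function is a hypothetical stand-in that always returns a known target, and the sigma values are made up for illustration. A real sampler would call the diffusion model instead.

```python
import random


def euler_sample(x, sigmas, denoise):
    """Euler sampler: move x through the decreasing noise levels in `sigmas`."""
    for sigma, sigma_next in zip(sigmas[:-1], sigmas[1:]):
        denoised = denoise(x, sigma)
        # Slope: distance from the model's clean estimate, per unit of noise
        slope = [(xi - di) / sigma for xi, di in zip(x, denoised)]
        # Step to the next (smaller) noise level along that slope
        x = [xi + (sigma_next - sigma) * si for xi, si in zip(x, slope)]
    return x


TARGET = [0.2, -0.4, 0.9]  # hypothetical "clean image" values


def perfect_denoiser(x, sigma):
    """Stand-in for the diffusion model: always predicts the target."""
    return TARGET


sigmas = [10.0, 5.0, 2.0, 0.5, 0.0]  # illustrative noise levels, high to low
rng = random.Random(0)
start = [sigmas[0] * rng.gauss(0.0, 1.0) for _ in TARGET]  # pure noise
result = euler_sample(start, sigmas, perfect_denoiser)
print(result)  # numerically equal to TARGET
```

Other samplers, such as DPM++ 2M and UniPC, differ mainly in how cleverly they combine model estimates across steps. The same number of steps can therefore produce noticeably different results.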

Scheduler

The scheduler controls how noise levels are distributed across the denoising steps; in other words, it determines the noise reduction curve.

- Normal: Standard noise scheduling.

- Karras: Often gives better results at lower step counts.
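Schedulers are just different ways of spacing the noise levels (sigmas) that the sampler steps through, and the step count sets how many levels there are. The sketch below implements the Karras et al. (2022) schedule with its usual rho = 7. It compares the result against evenly spaced sigmas, which are not ComfyUI's "normal" scheduler and serve only as a simple baseline. The default sigma range (about 0.0292 to 14.6) is an assumption based on values commonly quoted for Stable Diffusion 1.5; other models use different ranges.

```python
def karras_sigmas(steps, sigma_min=0.0292, sigma_max=14.6146, rho=7.0):
    """Karras et al. (2022) noise levels, high to low, followed by a final 0."""
    if steps < 2:
        raise ValueError("need at least 2 steps")
    max_inv = sigma_max ** (1 / rho)
    min_inv = sigma_min ** (1 / rho)
    sigmas = [(max_inv + i / (steps - 1) * (min_inv - max_inv)) ** rho
              for i in range(steps)]
    return sigmas + [0.0]  # the last step lands on a clean image


def linear_sigmas(steps, sigma_min=0.0292, sigma_max=14.6146):
    """Evenly spaced noise levels, used here only as a comparison baseline."""
    if steps < 2:
        raise ValueError("need at least 2 steps")
    gap = (sigma_max - sigma_min) / (steps - 1)
    return [sigma_max - i * gap for i in range(steps)] + [0.0]


karras = karras_sigmas(10)
linear = linear_sigmas(10)
# Count the steps spent at low noise levels (sigma below 1.0)
print("karras low-noise steps:", sum(s < 1.0 for s in karras[:-1]))
print("linear low-noise steps:", sum(s < 1.0 for s in linear[:-1]))
```

Karras spacing spends many more of its steps at low noise levels. This is one plausible reason it holds up well when the total step count is small.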

Denoise (Default: 1.0, Range: 0.0-1.0)

This is one of your most important controls for image-to-image workflows. Denoise determines how much of the input image is replaced with new content:

- 0.0: Don't change anything; the output will be identical to the input.

- 0.5: Keep about 50% of the original image and regenerate the rest.

- 1.0: Completely regenerate; ignore the input image and start from pure noise.
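Denoise does not literally blend percentages of pixels. As far as we can tell from ComfyUI's sampler code, a value below 1.0 makes KSampler build a longer noise schedule (about `steps / denoise` steps) and run only its last `steps` steps. The input latent is therefore noised only partway before being refined. The sketch below reproduces that truncation idea with a Karras schedule. The helper names and sigma range are assumptions for illustration, and unlike ComfyUI it simply rejects a denoise of 0.

```python
def karras_sigmas(n, sigma_min=0.0292, sigma_max=14.6146, rho=7.0):
    """Karras et al. (2022) noise levels, high to low, plus a final 0."""
    if n < 2:
        raise ValueError("need at least 2 steps")
    lo, hi = sigma_min ** (1 / rho), sigma_max ** (1 / rho)
    return [(hi + i / (n - 1) * (lo - hi)) ** rho for i in range(n)] + [0.0]


def img2img_sigmas(steps, denoise):
    """Keep only the tail of a longer schedule, mimicking partial denoising."""
    if not 0.0 < denoise <= 1.0:
        raise ValueError("denoise must be in (0, 1]")
    total = int(steps / denoise)  # e.g. 20 steps at 0.5 -> a 40-step schedule
    return karras_sigmas(total)[-(steps + 1):]


for d in (1.0, 0.7, 0.3):
    sigmas = img2img_sigmas(20, d)
    print(f"denoise={d}: starts at sigma {sigmas[0]:.2f}, {len(sigmas) - 1} steps")
```

Lower denoise values start sampling at a lower noise level. More of the input image's structure therefore survives, even though the number of steps actually run stays the same.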

# Example: Generating a Character Portrait

Prompt: “A cyberpunk android with neon blue eyes, detailed mechanical parts, dramatic lighting.”

Settings:

- Model: Flux

- Steps: 20

- CFG: 2.0

- Sampler: Default

- Resolution: 1024×1024

- Seed: Randomize

Negative prompt: “low quality, blurry, oversaturated, unrealistic.”
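When scripting many variations, the portrait settings can be captured as a small Python dictionary. This sketch rests on several assumptions. The key names follow KSampler's inputs. "euler" stands in for "Default", since it is, as far as we know, the first sampler in ComfyUI's list. A fresh seed is drawn from the full KSampler range on every call. `portrait_settings` is a hypothetical helper.

```python
import random

MAX_SEED = 2**64 - 1  # top of KSampler's seed range


def portrait_settings():
    """Settings for the cyberpunk portrait example, with a randomized seed."""
    settings = {
        "positive": ("A cyberpunk android with neon blue eyes, detailed "
                     "mechanical parts, dramatic lighting."),
        "negative": "low quality, blurry, oversaturated, unrealistic.",
        "steps": 20,
        "cfg": 2.0,               # low CFG, as suggested for Flux
        "sampler_name": "euler",  # assumed stand-in for "Default"
        "width": 1024,
        "height": 1024,
        "seed": random.randint(0, MAX_SEED),  # "Randomize"
    }
    # Latents are 1/8 of the pixel size, so dimensions must divide by 8
    if settings["width"] % 8 or settings["height"] % 8:
        raise ValueError("width and height must be multiples of 8")
    return settings


a, b = portrait_settings(), portrait_settings()
print(a["seed"], b["seed"])  # two independent random seeds
```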

// Exploring Image-to-Image Workflows

Image-to-image workflows build on the text-to-image foundation by adding an input image to guide the generation process.

Scenario: You have a photograph of a landscape and want it in an oil painting style.

- Load your landscape image

- Positive Prompt: “oil painting, impressionist style, vibrant colors, brush strokes”

- Denoise: 0.7
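In ComfyUI terms, the oil-painting recipe replaces the Empty Latent Image node with Load Image followed by VAE Encode, and lowers KSampler's denoise. The sketch below expresses that graph in ComfyUI's API (JSON) format as a Python dictionary. Node class names are the built-in ones as we understand them. The file names "landscape.png" (an image in ComfyUI's `input` folder) and "model.safetensors" are hypothetical, as is the default CFG of 7.0.

```python
def build_img2img(ckpt_name, image_name, positive, negative,
                  denoise=0.7, seed=0, steps=20, cfg=7.0):
    """Image-to-image workflow in ComfyUI's API format (node id -> node)."""
    return {
        "1": {"class_type": "CheckpointLoaderSimple",
              "inputs": {"ckpt_name": ckpt_name}},
        "2": {"class_type": "CLIPTextEncode",
              "inputs": {"text": positive, "clip": ["1", 1]}},
        "3": {"class_type": "CLIPTextEncode",
              "inputs": {"text": negative, "clip": ["1", 1]}},
        # RGB universe -> latent universe: load the photo, then encode it
        "4": {"class_type": "LoadImage", "inputs": {"image": image_name}},
        "5": {"class_type": "VAEEncode",
              "inputs": {"pixels": ["4", 0], "vae": ["1", 2]}},
        # denoise < 1.0 keeps part of the encoded input instead of pure noise
        "6": {"class_type": "KSampler",
              "inputs": {"model": ["1", 0], "positive": ["2", 0],
                         "negative": ["3", 0], "latent_image": ["5", 0],
                         "seed": seed, "steps": steps, "cfg": cfg,
                         "sampler_name": "euler", "scheduler": "normal",
                         "denoise": denoise}},
        "7": {"class_type": "VAEDecode",
              "inputs": {"samples": ["6", 0], "vae": ["1", 2]}},
        "8": {"class_type": "SaveImage",
              "inputs": {"images": ["7", 0], "filename_prefix": "oil_painting"}},
    }


workflow = build_img2img(
    "model.safetensors", "landscape.png",
    "oil painting, impressionist style, vibrant colors, brush strokes",
    "blurry, low quality", denoise=0.7)
```

The pose-guided example that follows uses the same graph with `denoise=0.3` and the character description as the prompt.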

// Performing Pose-Guided Character Generation

Scenario: You generated a character you love but want a different pose.

- Load your original character image

- Positive Prompt: “Same character description, standing pose, arms at side”

- Denoise: 0.3

# Installing and Setting Up ComfyUI

// Cloud-Based (Easiest for Beginners)

Visit RunComfy.com and click Launch Comfy Cloud at the top right. Alternatively, you can simply sign up in your browser.


// Using Windows Portable

- Before you download, make sure you have suitable hardware, ideally an NVIDIA GPU with CUDA support. The portable build targets Windows; on macOS with Apple Silicon, use the manual installation instead.

- Download the portable Windows build from the ComfyUI GitHub releases page.

- Extract it to your desired location.

- Run `run_nvidia_gpu.bat` (if you have an NVIDIA GPU) or `run_cpu.bat`.

- Open your browser to http://localhost:8188.

// Performing Manual Installation

- Install Python: Download version 3.12 or 3.13.

- Clone the repository: `git clone https://github.com/comfyanonymous/ComfyUI.git`, then change into the new `ComfyUI` folder.

- Install PyTorch: Follow the platform-specific instructions for your GPU.

- Install dependencies: `pip install -r requirements.txt`

- Add models: Place model checkpoints in `models/checkpoints`.

- Run: `python main.py`
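Before running `python main.py`, a quick environment check can save some head-scratching. This sketch assumes the Python 3.12/3.13 recommendation above. It reports whether PyTorch is importable and which accelerator it sees, and it never installs anything. `python_ok` and `check_environment` are hypothetical helpers.

```python
import importlib.util
import sys

SUPPORTED = {(3, 12), (3, 13)}  # versions recommended above


def python_ok(version_info=sys.version_info):
    """True if the given Python version is one of the recommended ones."""
    return tuple(version_info[:2]) in SUPPORTED


def check_environment():
    """Print a short report on Python and PyTorch availability."""
    major, minor = sys.version_info[:2]
    print(f"Python {major}.{minor}: "
          f"{'OK' if python_ok() else 'not one of 3.12/3.13'}")
    if importlib.util.find_spec("torch") is None:
        print("PyTorch not installed: follow the platform-specific instructions")
        return
    import torch  # imported only once we know it exists
    mps = getattr(torch.backends, "mps", None)
    if torch.cuda.is_available():
        print("CUDA GPU:", torch.cuda.get_device_name(0))
    elif mps is not None and mps.is_available():
        print("Apple Silicon (MPS) backend available")
    else:
        print("No GPU backend detected (CPU only)")


if __name__ == "__main__":
    check_environment()
```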

# Working With Different AI Models

ComfyUI supports numerous state-of-the-art models. Here are the current leading options:

| Flux (Recommended for Realism) | Stable Diffusion 3.5 | Older Models (SD 1.5, SDXL) |
|---|---|---|
| Excellent for photorealistic images | Well-balanced quality and speed | Extensively fine-tuned by the community |
| Fast generation | Supports diverse styles | Huge low-rank adaptation (LoRA) ecosystem |
| CFG: 1-3 range | CFG: 4-7 range | Still excellent for specific workflows |

# Advancing Workflows With Low-Rank Adaptations

Low-rank adaptations (LoRAs) are small adapter files that fine-tune models for specific styles, subjects, or aesthetics without modifying the base model. Common uses include character consistency, art styles, and custom concepts. To use one, add a "Load LoRA" node, select your file, and connect it between your model loader and the nodes that consume the model and CLIP outputs.
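The "low-rank" in LoRA describes how the adapter stores its changes. Instead of a full update to a d × k weight matrix, it stores two thin matrices, B (d × r) and A (r × k). Their product is added to the frozen weight, scaled by a strength value; the Load LoRA node exposes this as model and CLIP strength. The pure-Python sketch below uses made-up numbers to show both the merge and why the files are small. It is a simplified illustration that omits the alpha/rank scaling many LoRA files also apply.

```python
def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def apply_lora(weight, down, up, strength=1.0):
    """Return W + strength * (up @ down) without modifying W."""
    delta = matmul(up, down)  # (d x r) @ (r x k) -> d x k
    return [[w + strength * dv for w, dv in zip(w_row, d_row)]
            for w_row, d_row in zip(weight, delta)]


def lora_param_count(d, k, r):
    """Parameters in a full d x k update versus a rank-r LoRA update."""
    return d * k, r * (d + k)


W = [[1.0, 0.0], [0.0, 1.0]]  # frozen base weight (2 x 2)
B = [[0.5], [0.25]]           # "up" matrix, d x r with r = 1
A = [[2.0, -2.0]]             # "down" matrix, r x k
print(apply_lora(W, A, B, strength=0.8))
print(lora_param_count(768, 768, 8))  # (589824, 12288)
```

In this toy case, a rank-8 update to a 768 × 768 layer stores 48 times fewer numbers than a full update. The base model itself is never overwritten.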

// Guiding Image Generation with ControlNets

ControlNets provide spatial control over generation, forcing the model to respect pose, edge maps, or depth. With them, you can:

- Force specific poses from reference images

- Preserve object structure while changing style

- Guide composition based on edge maps

- Respect depth information
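In a workflow, a ControlNet sits between the prompt encoders and KSampler. It takes the positive and negative conditioning plus a control image, such as a pose skeleton or edge map, and returns modified conditioning. The API-format fragment below sketches that wiring. `ControlNetLoader` and `ControlNetApplyAdvanced` are built-in node names as we understand current ComfyUI releases, so check your version's node list. The node IDs and the model file name are hypothetical: "2" and "3" stand for the prompt encoders, and "10" for a node that outputs the control image.

```python
def controlnet_nodes(positive_ref, negative_ref, control_image_ref,
                     model_name="control_openpose.safetensors", strength=0.8):
    """Nodes that add a ControlNet to an existing API-format workflow."""
    return {
        "20": {"class_type": "ControlNetLoader",
               "inputs": {"control_net_name": model_name}},
        "21": {"class_type": "ControlNetApplyAdvanced",
               "inputs": {"positive": positive_ref, "negative": negative_ref,
                          "control_net": ["20", 0], "image": control_image_ref,
                          "strength": strength,
                          # apply the guidance over the whole sampling run
                          "start_percent": 0.0, "end_percent": 1.0}},
    }


nodes = controlnet_nodes(["2", 0], ["3", 0], ["10", 0])
# KSampler would then take positive=["21", 0] and negative=["21", 1]
```

Lowering `strength`, or ending the guidance early with `end_percent`, gives the model more freedom once the overall pose or structure is established.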

// Performing Selective Image Editing with Inpainting

Inpainting lets you regenerate only specific areas of an image while keeping the rest intact.

Workflow: Load image → Paint mask → Inpainting KSampler → Result
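The key to "keeping the rest intact" is the mask. Where the mask is 1, the new pixels are used; where it is 0, the original pixels survive; values in between blend the two for soft edges. ComfyUI handles this internally, for example by restricting noise to the masked latent region. The sketch below shows only the final compositing idea on grayscale pixel lists, and `composite` is a hypothetical helper.

```python
def composite(original, generated, mask):
    """Per pixel: mask=1 takes the generated value, mask=0 keeps the original."""
    return [
        [m * g + (1.0 - m) * o for o, g, m in zip(o_row, g_row, m_row)]
        for o_row, g_row, m_row in zip(original, generated, mask)
    ]


original = [[0.1, 0.1], [0.1, 0.1]]
generated = [[0.9, 0.9], [0.9, 0.9]]
mask = [[0.0, 1.0], [0.0, 0.5]]  # right column painted; bottom-right feathered
print(composite(original, generated, mask))
```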

// Increasing Resolution with Upscaling

Use upscale nodes after generation to increase resolution without regenerating the entire image. Popular upscalers include RealESRGAN and SwinIR.
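Model-based upscalers such as RealESRGAN usually work at a fixed factor (4× is common). A quick calculation tells you the final size and whether you need to scale back down. In ComfyUI, this is typically done with an `UpscaleModelLoader` node feeding `ImageUpscaleWithModel`, with the model file placed in `models/upscale_models`. The fragment below sketches both the arithmetic and those nodes in API format. The model file name is hypothetical, and the node IDs assume node "6" is your VAE Decode.

```python
def upscaled_size(width, height, factor=4):
    """Output resolution of a fixed-factor upscaling model."""
    return width * factor, height * factor


def upscale_nodes(image_ref, model_name="RealESRGAN_x4plus.pth"):
    """API-format nodes that upscale an IMAGE output with a model."""
    return {
        "30": {"class_type": "UpscaleModelLoader",
               "inputs": {"model_name": model_name}},
        "31": {"class_type": "ImageUpscaleWithModel",
               "inputs": {"upscale_model": ["30", 0], "image": image_ref}},
        "32": {"class_type": "SaveImage",
               "inputs": {"images": ["31", 0], "filename_prefix": "upscaled"}},
    }


print(upscaled_size(1024, 1024))  # (4096, 4096)
nodes = upscale_nodes(["6", 0])
```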

# Conclusion

ComfyUI represents a significant shift in content creation. Its node-based architecture gives you power previously reserved for software engineers while remaining accessible to newcomers. The learning curve is real, but every concept you learn opens new creative possibilities.

Start by building a simple text-to-image workflow, generating a few images, and adjusting parameters. Within weeks, you'll be creating sophisticated workflows. Within months, you'll be pushing the boundaries of what's possible in the generative space.

Shittu Olumide is a software engineer and technical writer passionate about leveraging cutting-edge technologies to craft compelling narratives, with a keen eye for detail and a knack for simplifying complex concepts. You can also find Shittu on Twitter.