AI agents are now operating inside production systems, querying Snowflake, updating Salesforce, and executing business logic autonomously. In many enterprises, they authenticate using static API keys or shared credentials rather than distinct identities in the corporate IdP.

Authenticating autonomous systems through shared credentials introduces real governance risk.

When an agent executes an action, logs often attribute it to a developer key or service account instead of a clearly defined autonomous actor. Attribution becomes ambiguous. Least privilege weakens. Revocation may require rotating credentials or modifying code rather than disabling a governed identity. In a non-deterministic environment, that delay slows investigation and containment.

Shared credentials turn autonomous systems into “shadow identities”: actors operating inside production without a distinct, governed identity in the enterprise directory.

Most organizations have monitoring and guardrails in place. The problem is structural. Autonomous systems operate outside the first-class identity governance that the same control plane applies to human users. Closing this gap requires aligning agents with the identity model that governs your workforce, ensuring every autonomous actor is traceable, permission-scoped, and centrally revocable.

The hidden risk: Modern agentic AI is non-deterministic

Traditional enterprise software follows predefined logic. Given the same input, it produces the same output.

Agentic AI systems operate differently. Instead of executing a fixed script, they use probabilistic models to:

- Evaluate context

- Retrieve information dynamically

- Construct action paths in real time

If you instruct an agent to optimize a supply chain route, it may consult weather forecasts, fuel cost data, and historical performance before choosing a route. That flexibility lets agents solve complex, multi-system problems that traditional software cannot handle.

However, non-deterministic systems introduce new governance problems:

- Execution paths may differ from one request to the next.

- Retrieved data sources may vary depending on context.

- Outputs can contain reasoning errors or inaccurate conclusions.

- Actions may extend beyond what a developer explicitly scripted.

When a system can repeatedly access company data and execute actions autonomously, it cannot be governed like a static application. It requires clear identity attribution, tightly scoped permissions, continuous monitoring, and centralized revocation authority.

Why credential-based security breaks in agentic environments

Most enterprises still secure AI agents using static API keys or shared service credentials. That model worked when software executed predictable logic. It breaks down when autonomous systems operate across production environments.

When an agent authenticates with a shared credential, its activity is logged but not clearly attributed. A Salesforce update or Snowflake query may appear to originate from a developer key rather than from a distinct autonomous system. Attribution becomes blurred. Least privilege is harder to enforce. Containment depends on rotating credentials or modifying code instead of disabling a governed identity.
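The attribution problem is easier to see with an example. The minimal Python sketch below compares two audit trails. The principal names, systems, and action labels are hypothetical, and real Salesforce or Snowflake logs use their own schemas. The point is only that a shared key collapses several actors into one, while distinct identities keep them apart.

```python
from collections import Counter
from dataclasses import dataclass


@dataclass(frozen=True)
class AuditEvent:
    principal: str  # identity recorded by the target system's log
    system: str     # hypothetical labels such as "salesforce" or "snowflake"
    action: str


# Shared-credential model: three different agents reuse one developer key,
# so every event carries the same principal.
shared_log = [
    AuditEvent("dev-key-7f3a", "salesforce", "update_opportunity"),
    AuditEvent("dev-key-7f3a", "snowflake", "select_orders"),
    AuditEvent("dev-key-7f3a", "snowflake", "select_customers"),
]

# Identity-backed model: each agent authenticates as its own principal.
identity_log = [
    AuditEvent("agent:pricing-optimizer", "salesforce", "update_opportunity"),
    AuditEvent("agent:supply-chain-router", "snowflake", "select_orders"),
    AuditEvent("agent:churn-analyst", "snowflake", "select_customers"),
]


def actions_by_principal(log):
    """Count how many logged actions are attributed to each principal."""
    return Counter(event.principal for event in log)


def attributable_actors(log):
    """Number of distinct actors an investigator can tell apart in the log."""
    return len(actions_by_principal(log))


print(attributable_actors(shared_log))    # all activity collapses onto one key
print(attributable_actors(identity_log))  # one principal per agent
```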

The problem is identity governance, not monitoring visibility.

Traditional security assumes credentials map to accountable users or services. Shared credentials break that assumption. In a non-deterministic environment, that ambiguity slows investigation and increases exposure.

The strategic shift: Identity-first governance

The governance gap created by shadow identities cannot be closed with additional monitoring. It requires a structural shift in how autonomous systems are governed.

When a system can dynamically retrieve data, generate probabilistic outputs, and execute actions across enterprise platforms, it is no longer just an application. It is an operational actor. Governance must reflect that.

Identity-first governance treats autonomous systems as first-class identities within the same directory that governs human users. Each agent receives a distinct identity, clearly scoped permissions, and auditable activity attribution.

This changes the control model. Access is tied to identity rather than static credentials. Actions are logged to a specific actor. Permissions can be adjusted without modifying code. Revocation happens at the identity layer, not within application logic.
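Here is a minimal sketch of this control model. It assumes a hypothetical in-memory `Directory` class standing in for an identity provider; it is not the Okta or DataRobot API. Permissions live as data on each identity, so adjusting or revoking them never touches agent code.

```python
from dataclasses import dataclass, field


@dataclass
class AgentIdentity:
    agent_id: str
    owner: str  # accountable human or team
    scopes: set[str] = field(default_factory=set)
    active: bool = True


class Directory:
    """Hypothetical in-memory stand-in for an identity provider's directory."""

    def __init__(self):
        self._identities: dict[str, AgentIdentity] = {}

    def provision(self, agent_id, owner, scopes):
        self._identities[agent_id] = AgentIdentity(agent_id, owner, set(scopes))

    def grant(self, agent_id, scope):
        self._identities[agent_id].scopes.add(scope)

    def revoke_scope(self, agent_id, scope):
        self._identities[agent_id].scopes.discard(scope)

    def disable(self, agent_id):
        self._identities[agent_id].active = False

    def is_allowed(self, agent_id, scope):
        # Unknown or disabled identities are denied; otherwise check scopes.
        ident = self._identities.get(agent_id)
        return ident is not None and ident.active and scope in ident.scopes


# Usage with hypothetical agent and scope names.
directory = Directory()
directory.provision("agent:supply-chain-router", "logistics-team", {"snowflake:read"})
print(directory.is_allowed("agent:supply-chain-router", "snowflake:read"))
print(directory.is_allowed("agent:supply-chain-router", "salesforce:write"))
directory.revoke_scope("agent:supply-chain-router", "snowflake:read")
print(directory.is_allowed("agent:supply-chain-router", "snowflake:read"))
```

The agent never holds a key of its own. Whether a call is allowed is decided from directory data at request time, which is what lets governance workflows change access without a code change or redeployment.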

The result is a unified identity plane for human and autonomous actors. Instead of building parallel AI security stacks, organizations extend existing identity controls. Policy remains consistent. Incident response remains centralized. Innovation scales without fragmenting governance.

A practical example: Identity-backed agents in practice

One architectural response to the identity governance gap is to provision autonomous systems as first-class identities within the corporate directory, rather than authenticating them through static API keys.

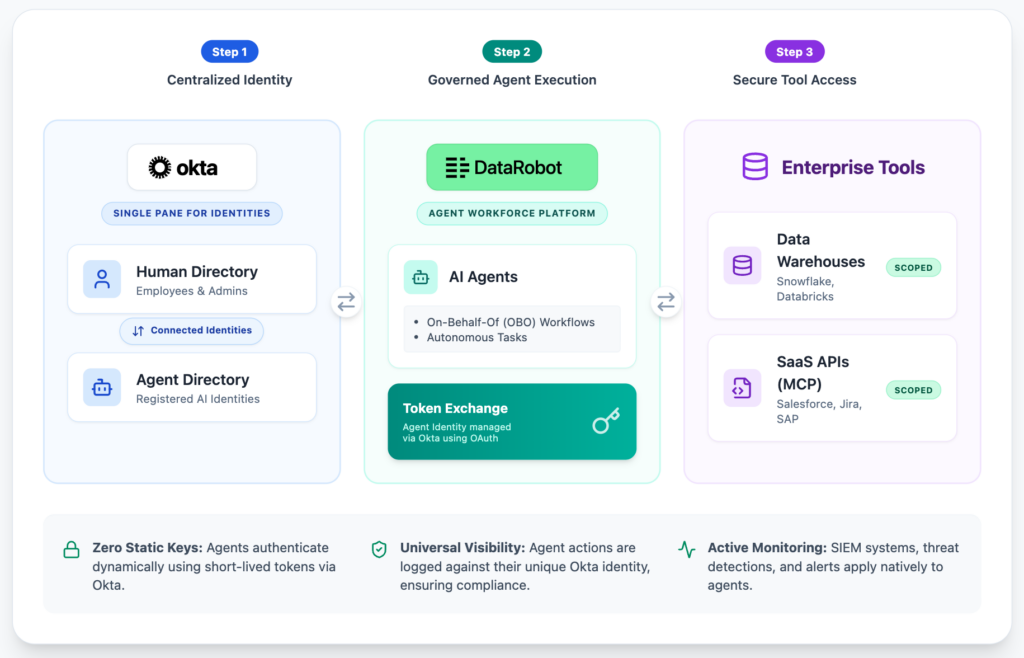

This approach requires coordination between agent orchestration and enterprise identity infrastructure. Through a deep integration between DataRobot and Okta, organizations can now provision agents built in the DataRobot Agentic Workforce Platform as governed, first-class identities directly within Okta, instead of relying on shared credentials.

In this model, each agent receives a directory-backed identity. Authentication happens through short-lived, policy-controlled tokens rather than long-lived credentials embedded in code. Actions are logged to a specific autonomous actor. Permissions are scoped using existing least-privilege controls.
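The next sketch shows what short-lived, identity-bound tokens provide. It uses an HMAC-signed token from the Python standard library as a simplified stand-in for the OAuth 2.0 access tokens an IdP such as Okta would issue. The signing key, five-minute TTL, claim names, and scope strings are assumptions for illustration only. Production systems should rely on the IdP's tokens and a vetted OAuth/JWT library rather than a hand-rolled format.

```python
import base64
import hashlib
import hmac
import json
import time

SIGNING_KEY = b"demo-signing-key"  # hypothetical; a real IdP manages its own keys
TOKEN_TTL_SECONDS = 300            # assumed policy: tokens live five minutes


def _b64(data: bytes) -> str:
    return base64.urlsafe_b64encode(data).decode().rstrip("=")


def _unb64(text: str) -> bytes:
    return base64.urlsafe_b64decode(text + "=" * (-len(text) % 4))


def _sign(payload: str) -> str:
    return _b64(hmac.new(SIGNING_KEY, payload.encode(), hashlib.sha256).digest())


def issue_token(agent_id, scopes, now=None):
    """Issue a signed, short-lived token bound to one agent identity."""
    now = time.time() if now is None else now
    claims = {"sub": agent_id, "scope": sorted(scopes), "exp": now + TOKEN_TTL_SECONDS}
    payload = _b64(json.dumps(claims, sort_keys=True).encode())
    return f"{payload}.{_sign(payload)}"


def verify_token(token, required_scope, now=None):
    """Return the agent id if the token is authentic, unexpired, and in scope; else None."""
    now = time.time() if now is None else now
    try:
        payload, sig = token.split(".")
    except ValueError:
        return None  # malformed token
    if not hmac.compare_digest(sig, _sign(payload)):
        return None  # forged or tampered token
    claims = json.loads(_unb64(payload))
    if now >= claims["exp"] or required_scope not in claims["scope"]:
        return None  # expired or out of scope
    return claims["sub"]


token = issue_token("agent:churn-analyst", {"snowflake:read"}, now=1_000)
print(verify_token(token, "snowflake:read", now=1_100))  # agent:churn-analyst
print(verify_token(token, "snowflake:read", now=1_400))  # None (expired)
```

Because every token names one agent and expires quickly, a leaked token is useful only briefly. Access cannot outlive the policy that issued it.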

This directly addresses the attribution and revocation challenges described earlier. When an agent is deployed, its identity is created within the corporate IdP. When permissions change, governance workflows apply. If behavior deviates from expectations, security teams can restrict or disable the agent at the identity layer, immediately adjusting its access across integrated systems such as Salesforce or Snowflake.
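The sketch below shows why identity-layer revocation reaches every integrated system at once. `IdentityPlane` and `ResourceGuard` are hypothetical stand-ins for the IdP and for connectors to systems such as Salesforce or Snowflake; they are not real integration APIs.

```python
class IdentityPlane:
    """Hypothetical central identity store shared by every integrated system."""

    def __init__(self):
        self._active: dict[str, bool] = {}

    def provision(self, agent_id):
        self._active[agent_id] = True

    def disable(self, agent_id):
        self._active[agent_id] = False

    def is_active(self, agent_id):
        # Identities that were never provisioned are treated as inactive.
        return self._active.get(agent_id, False)


class ResourceGuard:
    """Stand-in for an integrated system that authorizes each agent request."""

    def __init__(self, name, identity_plane):
        self.name = name
        self.identity_plane = identity_plane

    def handle(self, agent_id, action):
        # Every request re-checks identity status instead of trusting a stored key.
        if not self.identity_plane.is_active(agent_id):
            return f"{self.name}: denied {action} for {agent_id}"
        return f"{self.name}: executed {action} for {agent_id}"


plane = IdentityPlane()
crm = ResourceGuard("salesforce", plane)
warehouse = ResourceGuard("snowflake", plane)

plane.provision("agent:pricing-optimizer")
print(crm.handle("agent:pricing-optimizer", "update_opportunity"))
plane.disable("agent:pricing-optimizer")  # a single action at the identity layer
print(crm.handle("agent:pricing-optimizer", "update_opportunity"))
print(warehouse.handle("agent:pricing-optimizer", "select_orders"))
```

In a real deployment, how quickly a disable takes effect depends on token lifetimes. It also depends on whether downstream systems check token status, for example through OAuth token introspection, rather than trusting a token until it expires. That is one reason short token lifetimes matter.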

The impact is operational. Autonomous systems become visible actors within the same identity plane that secures human users. Rather than introducing a parallel AI security stack, organizations extend the controls they already operate and audit.

Three governance principles for agentic AI

As autonomous systems move into production environments, governance must become explicit. At minimum, three principles are essential.

1. Eliminate static credentials

Autonomous systems should not authenticate through long-lived API keys or shared service accounts. Production agents must use short-lived, policy-controlled credentials tied to a governed identity. If an autonomous system can access enterprise systems, it must authenticate as a distinct actor within the identity provider.
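A practical first step toward this principle is to audit the existing credential inventory. The sketch below flags credentials that are not bound to an identity, never expire, outlive policy, or are shared. It assumes a hypothetical `Credential` record and a one-hour maximum lifetime chosen purely for illustration.

```python
from dataclasses import dataclass
from datetime import timedelta
from typing import Optional

MAX_CREDENTIAL_LIFETIME = timedelta(hours=1)  # assumed policy threshold


@dataclass(frozen=True)
class Credential:
    name: str
    kind: str                      # "api_key", "shared_service_account", or "idp_token"
    bound_identity: Optional[str]  # agent identity in the IdP, if any
    lifetime: Optional[timedelta]  # None means the credential never expires


def violations(cred):
    """List the ways a credential breaks the 'no static credentials' principle."""
    problems = []
    if cred.bound_identity is None:
        problems.append("not tied to a governed identity")
    if cred.lifetime is None:
        problems.append("never expires")
    elif cred.lifetime > MAX_CREDENTIAL_LIFETIME:
        problems.append("lifetime exceeds policy")
    if cred.kind == "shared_service_account":
        problems.append("shared across actors")
    return problems


# Hypothetical inventory entries.
inventory = [
    Credential("legacy-snowflake-key", "api_key", None, None),
    Credential("etl-service-account", "shared_service_account", "svc-etl", timedelta(days=90)),
    Credential("router-token", "idp_token", "agent:supply-chain-router", timedelta(minutes=5)),
]

for cred in inventory:
    print(cred.name, violations(cred) or "ok")
```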

2. Audit the actor, not the platform

Security logs should attribute actions to specific autonomous identities, not to generic services or developer keys. In non-deterministic systems, platform-level visibility is insufficient. Governance requires actor-level attribution to support investigation, anomaly detection, and access review.
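Actor-level attribution is what makes access review possible. This sketch compares what each actor was granted with what it actually did. It assumes hypothetical grants and an audit log that already records the acting agent identity.

- **Unused grants** are candidates for removal under least privilege.
- **Out-of-scope entries** point to either denied attempts or an enforcement gap worth investigating.

```python
from collections import defaultdict

# Hypothetical grants from the directory and actor-attributed audit entries.
granted = {
    "agent:churn-analyst": {"snowflake:read", "salesforce:read", "salesforce:write"},
    "agent:supply-chain-router": {"snowflake:read"},
}
audit_log = [
    ("agent:churn-analyst", "snowflake:read"),
    ("agent:churn-analyst", "salesforce:read"),
    ("agent:supply-chain-router", "snowflake:read"),
    ("agent:supply-chain-router", "salesforce:write"),
]


def review(granted, audit_log):
    """Per actor: scopes granted but never used, and logged scopes never granted."""
    used = defaultdict(set)
    for actor, scope in audit_log:
        used[actor].add(scope)
    report = {}
    for actor in granted.keys() | used.keys():
        grants = granted.get(actor, set())
        report[actor] = {
            "unused_grants": sorted(grants - used[actor]),
            "out_of_scope": sorted(used[actor] - grants),
        }
    return report


for actor, findings in sorted(review(granted, audit_log).items()):
    print(actor, findings)
```

None of this works if every entry is logged under one shared key. The review is only as precise as the actor recorded in each log line.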

3. Centralize revocation authority

Security teams must be able to restrict or disable an autonomous system through the primary identity control plane. Containment should not depend on code changes, credential rotation, or redeployment. Identity must function as an operational control surface.
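One common way to centralize revocation is to track a per-identity revocation epoch, sketched here with a hypothetical `RevocationAuthority`. Bumping an agent's epoch invalidates every token previously issued to it. No shared secret is rotated, no code changes, nothing is redeployed, and other agents are unaffected. This is a simplified illustration, not how any particular IdP implements revocation.

```python
import itertools
from dataclasses import dataclass


@dataclass(frozen=True)
class Token:
    token_id: int
    agent_id: str
    epoch: int  # the agent's revocation epoch when the token was issued


class RevocationAuthority:
    """Hypothetical central control plane that owns revocation for every agent."""

    def __init__(self):
        self._epochs: dict[str, int] = {}
        self._disabled: set[str] = set()
        self._ids = itertools.count(1)

    def issue(self, agent_id):
        if agent_id in self._disabled:
            raise PermissionError(f"{agent_id} is disabled")
        return Token(next(self._ids), agent_id, self._epochs.get(agent_id, 0))

    def revoke_sessions(self, agent_id):
        # Bumping the epoch invalidates every earlier token for this agent only.
        self._epochs[agent_id] = self._epochs.get(agent_id, 0) + 1

    def disable(self, agent_id):
        # Disabling also blocks new tokens until the identity is re-enabled.
        self._disabled.add(agent_id)
        self.revoke_sessions(agent_id)

    def is_valid(self, token):
        return (token.agent_id not in self._disabled
                and token.epoch == self._epochs.get(token.agent_id, 0))


authority = RevocationAuthority()
router = authority.issue("agent:supply-chain-router")
analyst = authority.issue("agent:churn-analyst")
authority.revoke_sessions("agent:supply-chain-router")
print(authority.is_valid(router), authority.is_valid(analyst))  # False True
```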

Non-deterministic systems are not inherently unsafe. But when autonomous systems operate without identity-level governance, exposure increases. Clear identity boundaries convert autonomy from a governance liability into a manageable extension of enterprise operations.

AI governance is workforce governance

Agentic systems now operate within core workflows, access regulated data, and execute actions with real consequences. Governance models designed for deterministic software are not sufficient for autonomous systems.

If a system can act, it must exist as a governed identity within the same control plane that secures your workforce. Identity becomes the foundation for attribution, least privilege, monitoring, and centralized revocation. When agents operate inside the corporate directory rather than outside it, oversight scales with innovation.

This model is taking shape through closer integration between agent orchestration platforms and enterprise identity providers, including the collaboration between DataRobot and Okta. Rather than building parallel AI security stacks, organizations can extend the identity infrastructure they already operate to autonomous systems. To see how identity-backed agents can operate securely inside enterprise environments, explore The Enterprise Guide to Agentic AI or schedule a demo to learn how DataRobot and Okta integrate agent orchestration with enterprise identity governance.