TL;DR

MCP servers connect LLMs to external tools and data sources through a standardized protocol. Public MCP servers provide capabilities such as web search, GitHub access, database queries, and browser automation through structured tool definitions.

These servers typically run as long-lived stdio processes that respond to tool invocation requests. To use them reliably in applications or share them across teams, they need to be deployed as stable, accessible endpoints.

Clarifai allows MCP servers to be deployed as managed endpoints. The platform runs the configured MCP process, handles lifecycle management, discovers available tools, and exposes them through its API.

This tutorial walks you through how to deploy any public MCP server. We will use the DuckDuckGo browser server as a reference implementation. The same approach applies to other stdio-based MCP servers, including GitHub, Slack, and filesystem integrations.

DuckDuckGo Browser MCP Server

The DuckDuckGo browser MCP server is an open-source MCP implementation that exposes web search capabilities as callable tools. It allows language models to perform search queries and retrieve structured results through the MCP protocol.

The server runs as a stdio-based process and provides tools such as ddg_search for executing web searches. When invoked, the tool returns structured search results that LLMs can use to answer questions or complete tasks that require current web information.

We use this server as the reference implementation because it doesn’t require additional secrets or external configuration. The only requirement is defining the MCP command in config.yaml, which makes it simple to deploy and test on Clarifai.

If you would like to build a custom MCP server from scratch with your own tools and logic, this guide walks through that process using FastMCP.

Now that we have defined the reference server, let’s start.

Set Up the Environment

Install the Clarifai Python SDK (pip install clarifai).

Set your Clarifai Personal Access Token (PAT) as an environment variable. Retrieve your PAT from the security settings in your Clarifai account.
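A quick check like the following can confirm that the token is visible to Python before you continue. It is a minimal sketch that assumes the variable is named CLARIFAI_PAT, the name the Clarifai SDK conventionally reads; the helper name load_pat is illustrative and not part of the SDK.

```python
import os


def load_pat(env=None):
    """Return the Clarifai PAT from the environment, or fail with a clear message."""
    env = os.environ if env is None else env
    # CLARIFAI_PAT is an assumed variable name; adjust it if your setup differs.
    pat = env.get("CLARIFAI_PAT", "").strip()
    if not pat:
        raise RuntimeError(
            "CLARIFAI_PAT is not set; export it before using the CLI or SDK."
        )
    return pat
```

Calling load_pat() early in a script surfaces a missing token as a readable error instead of a failed request later on.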

Clone the runners-examples repository and navigate to the browser MCP server directory.

The directory contains the deployment files:

- config.yaml: Deployment configuration and MCP server specification

- 1/model.py: Model class implementation

- requirements.txt: Python dependencies
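Before uploading, it can help to confirm that the directory has the layout listed above. The sketch below checks for those three files; the function name missing_files is illustrative, and the check says nothing about whether the file contents are valid.

```python
from pathlib import Path

# The three files listed above, relative to the server directory.
EXPECTED_FILES = ("config.yaml", "1/model.py", "requirements.txt")


def missing_files(root):
    """Return the expected deployment files that are absent under root."""
    base = Path(root)
    return [name for name in EXPECTED_FILES if not (base / name).is_file()]
```

An empty list from missing_files(".") means all three files are in place.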

Configure the Deployment

Before uploading, update config.yaml with your Clarifai model identifiers and compute settings. This file defines the model metadata, MCP server startup command, and resource requirements. Clarifai uses it to start the MCP server, allocate compute, and expose the server’s tools through the model endpoint.

The mcp_server section defines how the MCP server process is started. command specifies the executable, and args lists the arguments passed to that executable. In this example, uvx duckduckgo-mcp-server starts the DuckDuckGo MCP server as a stdio-based process.
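To make the relationship between command and args concrete, the sketch below mirrors the mcp_server section as a Python dict and joins the two fields into the argument vector a process launcher would receive. The dict stands in for the YAML file, and the helper names build_argv and as_shell_line are illustrative; this is not how Clarifai parses the file internally.

```python
import shlex

# Mirrors the mcp_server section of config.yaml as a Python dict.
# The command and args values are the ones named in the text above.
MCP_SERVER = {
    "command": "uvx",
    "args": ["duckduckgo-mcp-server"],
}


def build_argv(mcp_server):
    """Combine command and args into the argument vector used to spawn the process."""
    command = mcp_server.get("command")
    args = mcp_server.get("args", [])
    if not isinstance(command, str) or not command:
        raise ValueError("mcp_server.command must be a non-empty string")
    if not all(isinstance(arg, str) for arg in args):
        raise ValueError("mcp_server.args must be a list of strings")
    return [command, *args]


def as_shell_line(mcp_server):
    """Render the same command as one shell-quoted line, for display only."""
    return shlex.join(build_argv(mcp_server))
```

Swapping in another stdio-based server would only change the two values in the dict; the rest of the deployment stays the same.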

The model implementation in 1/model.py inherits from StdioMCPModelClass.

StdioMCPModelClass starts the process defined in config.yaml, discovers the available tools through the MCP protocol, and exposes those tools through the deployed model endpoint. No additional implementation is required beyond inheriting from StdioMCPModelClass.
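The start-then-discover flow described above can be illustrated with the standard library alone. The sketch below is not Clarifai's implementation: it spawns a process, performs the MCP initialize handshake as newline-delimited JSON-RPC over stdio, and asks for tools/list. FAKE_SERVER is a stand-in written for this example so it runs without network access, and the function name discover_tools is illustrative.

```python
import json
import subprocess
import sys

# Stand-in server for this sketch only; it answers just enough of the
# protocol to complete the handshake and list a single stub tool.
FAKE_SERVER = r'''
import json, sys
for line in sys.stdin:
    msg = json.loads(line)
    if "id" not in msg:
        continue  # notifications get no reply
    if msg["method"] == "tools/list":
        result = {"tools": [{"name": "ddg_search", "description": "stub",
                             "inputSchema": {"type": "object"}}]}
    else:
        result = {"protocolVersion": "2024-11-05", "capabilities": {"tools": {}},
                  "serverInfo": {"name": "fake", "version": "0"}}
    print(json.dumps({"jsonrpc": "2.0", "id": msg["id"], "result": result}), flush=True)
'''


def discover_tools(argv):
    """Start a stdio MCP server and return the names of the tools it reports."""
    with subprocess.Popen(
        argv, stdin=subprocess.PIPE, stdout=subprocess.PIPE, text=True
    ) as proc:

        def send(message):
            # Each JSON-RPC message travels as one line on the server's stdin.
            proc.stdin.write(json.dumps(message) + "\n")
            proc.stdin.flush()

        def request(request_id, method, params=None):
            send({"jsonrpc": "2.0", "id": request_id,
                  "method": method, "params": params or {}})
            return json.loads(proc.stdout.readline())["result"]

        # Handshake: initialize, then the initialized notification.
        request(1, "initialize", {
            "protocolVersion": "2024-11-05",
            "capabilities": {},
            "clientInfo": {"name": "sketch-client", "version": "0"},
        })
        send({"jsonrpc": "2.0", "method": "notifications/initialized"})

        tools = request(2, "tools/list")["tools"]
        return [tool["name"] for tool in tools]
        # Leaving the with-block closes the pipes, which lets the server exit.
```

Running discover_tools([sys.executable, "-c", FAKE_SERVER]) exercises the flow against the stand-in. A real server would be launched with the command and arguments from config.yaml instead.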

The DuckDuckGo MCP server runs on CPU and requires minimal resources.

Upload & Deploy MCP Server

Upload the MCP server using the Clarifai CLI by running clarifai model upload --skip_dockerfile.

The --skip_dockerfile flag is required when uploading MCP servers. This command packages the model directory and uploads it to your Clarifai account.

After uploading your MCP server, deploy it on compute so it can run and serve tool requests.

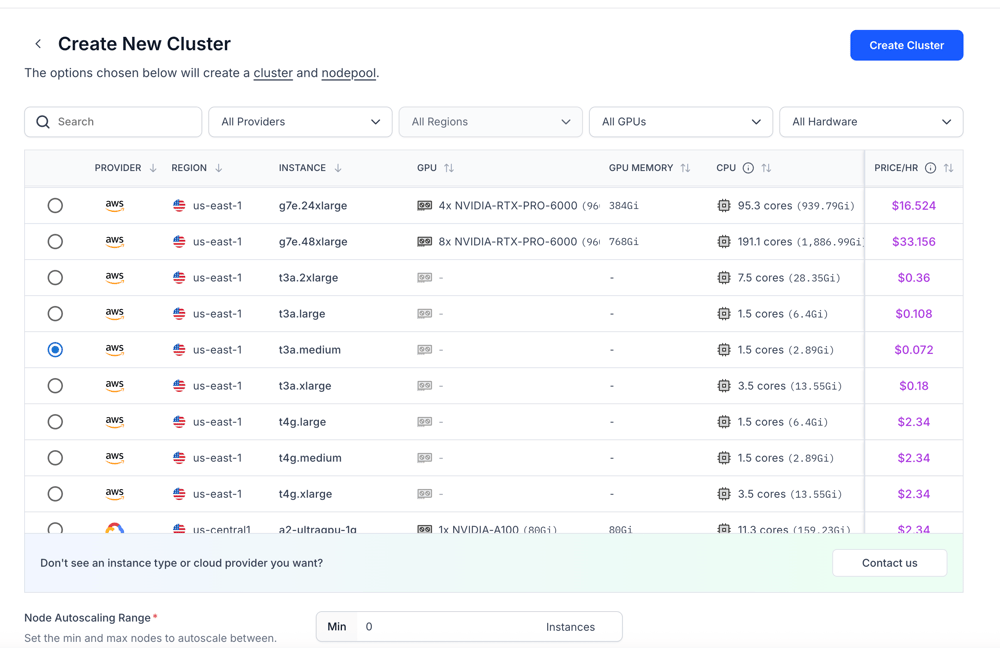

Go to the Compute section and create a new cluster. You will see a list of available instances across different providers and regions, along with their hardware specifications.

Each instance shows:

- Provider

- Region

- Instance type

- GPU and GPU memory

- CPU and system memory

- Hourly price

Select an instance based on the resource requirements you defined in your config.yaml file. For example, if you specified certain CPU and memory limits, choose an instance that meets or exceeds those values. Most MCP servers run as lightweight stdio processes, so a GPU is usually not required unless your server explicitly depends on one.
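The selection rule above (meet or exceed the configured CPU and memory, then prefer the cheaper option) can be written as a small filter. This is only a sketch of the reasoning: the Instance type, the catalogue entries, and every price below are made up for illustration and do not correspond to real Clarifai instances.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Instance:
    name: str
    cpus: float
    memory_gib: float
    hourly_price: float


def cheapest_fit(instances, cpu_needed, memory_gib_needed):
    """Return the cheapest instance that meets or exceeds both requirements."""
    candidates = [
        inst for inst in instances
        if inst.cpus >= cpu_needed and inst.memory_gib >= memory_gib_needed
    ]
    # default=None signals that nothing in the list is large enough.
    return min(candidates, key=lambda inst: inst.hourly_price, default=None)


# Hypothetical catalogue: names, sizes, and prices are invented for this example.
CATALOGUE = [
    Instance("small-cpu", cpus=1, memory_gib=2, hourly_price=0.04),
    Instance("medium-cpu", cpus=2, memory_gib=8, hourly_price=0.09),
    Instance("large-cpu", cpus=8, memory_gib=32, hourly_price=0.35),
]
```

With this catalogue, a server that needs 2 CPUs and 4 GiB of memory would land on the medium entry, since the small one falls short and the large one costs more.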

After selecting the instance, configure the node pool. You can set autoscaling parameters such as minimum and maximum replicas based on your expected workload.

Finally, create the cluster and node pool, then deploy your MCP server to the selected compute. Clarifai will start the server using the command defined in your config.yaml and expose its tools through the deployed model endpoint.

You can follow the guide to learn how to create your dedicated compute environment and deploy your MCP server to the platform.

Using the Deployed MCP Server

Once deployed, we can interact with the MCP server using the FastMCP client. The client connects to the Clarifai endpoint and discovers the available tools.

Point the client at the URL of your deployed MCP server endpoint.

The client establishes an HTTP connection to the deployed MCP endpoint and retrieves the tool definitions exposed by the DuckDuckGo server. The list_tools() call confirms that the server is running and that its tools are available for invocation.
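A client along these lines might look like the sketch below. It uses FastMCP's Client with StreamableHttpTransport. Three things are assumptions for illustration: the endpoint URL is read from a hypothetical MCP_SERVER_URL variable, the PAT from CLARIFAI_PAT, and the token is sent as a Bearer authorization header. Check your deployment details before relying on any of them.

```python
import asyncio
import os


def auth_headers(pat):
    """Build HTTP headers for the MCP endpoint (Bearer scheme is an assumption)."""
    return {"Authorization": f"Bearer {pat}"}


async def list_tool_names(url, pat):
    """Connect to the deployed MCP endpoint and return the tool names it exposes."""
    # Imported here so the helper above stays usable without fastmcp installed.
    from fastmcp import Client
    from fastmcp.client.transports import StreamableHttpTransport

    transport = StreamableHttpTransport(url=url, headers=auth_headers(pat))
    async with Client(transport) as client:
        tools = await client.list_tools()
        return [tool.name for tool in tools]


def main():
    # MCP_SERVER_URL is a hypothetical variable holding your endpoint URL.
    url = os.environ["MCP_SERVER_URL"]
    pat = os.environ["CLARIFAI_PAT"]
    for name in asyncio.run(list_tool_names(url, pat)):
        print(name)
```

Calling main() with both variables set prints one tool name per line. An empty result or a connection error usually means the deployment is not yet running or the URL is wrong.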

Integrate with LLMs

The tools exposed by your deployed MCP server can be used with any LLM that supports function calling. Configure your MCP client and OpenAI-compatible client to connect to your Clarifai MCP endpoint so the model can discover and invoke the available tools.

Your MCP server is now deployed as an API endpoint on Clarifai, and its tools can be accessed and invoked from any compatible LLM through the MCP client.
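Before an OpenAI-compatible model can call these tools, each MCP tool definition has to be expressed in the function-calling format. The sketch below does that conversion on plain dicts. It assumes each tool is a dict with name, description, and inputSchema keys, as in an MCP tools/list result; the function names are illustrative.

```python
def to_openai_tool(mcp_tool):
    """Convert one MCP tool definition (a dict) to an OpenAI-style tool entry."""
    return {
        "type": "function",
        "function": {
            "name": mcp_tool["name"],
            "description": mcp_tool.get("description") or "",
            # MCP publishes a JSON Schema under inputSchema; function calling
            # expects that same schema under the parameters key.
            "parameters": mcp_tool.get("inputSchema")
            or {"type": "object", "properties": {}},
        },
    }


def to_openai_tools(mcp_tools):
    """Convert a list of MCP tool definitions for use in a chat completion request."""
    return [to_openai_tool(tool) for tool in mcp_tools]
```

The resulting list can be passed as the tools argument of a chat completion request. When the model responds with a tool call, the application forwards the tool name and arguments to the MCP client and returns the result to the model.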

Frequently Asked Questions (FAQs)

- Can I deploy any MCP server using this method?

Yes. As long as the MCP server runs as a stdio-based process, it can be defined in the mcp_server section of config.yaml. Update the command and arguments, upload the model, and the server will be exposed through its own endpoint.

- Do MCP servers require Docker to deploy?

No. When uploading MCP servers using the Clarifai CLI, the --skip_dockerfile flag allows deployment without requiring a custom Dockerfile.

- Can I use deployed MCP servers with any LLM?

Yes. Any LLM that supports function calling or tool calling can use the tools exposed by a deployed MCP server. The tools must be formatted according to the model’s function calling schema.

- Do MCP servers require API keys?

It depends on the server implementation. Some public MCP servers, such as the DuckDuckGo example used in this guide, don’t require additional secrets. Others may require API credentials defined in environment variables or configuration.

Closing Thoughts

We converted a stdio-based MCP server into a publicly accessible API endpoint on Clarifai. Its tools can now be discovered and invoked by any LLM that supports function calling.

This approach lets you move MCP servers from local development into stable, shareable infrastructure without changing their core implementation. If a server runs over stdio, it can be packaged, deployed, and exposed through Clarifai.

You can now deploy your own MCP servers, connect them to your models, and extend your LLM applications with custom tools or external integrations. For more examples, explore the runners-examples repository.