Machine-learning models are living organisms: they grow, adapt, and eventually degrade. Managing their lifecycle is the difference between a proof-of-concept and a sustainable AI product. This guide shows you how to plan, build, deploy, monitor, and govern models while tapping into Clarifai’s platform for orchestration, local execution, and generative AI.

Quick Digest: What Does This Guide Cover?

- Definition & Importance: Understand what ML lifecycle management means and why it matters.

- Planning & Data: Learn how to define business problems and collect and prepare data.

- Development & Deployment: See how to train, evaluate and deploy models.

- Monitoring & Governance: Discover strategies for monitoring, drift detection and compliance.

- Advanced Topics: Dive into LLMOps, edge deployments and emerging trends.

- Real-World Stories: Explore case studies highlighting successes and lessons.

What Is ML Lifecycle Management?

Quick Summary: What does the ML lifecycle entail?

- ML lifecycle management covers the whole journey of a model, from problem framing and data engineering to deployment, monitoring and decommissioning. It treats data, models and code as co-evolving artifacts and ensures they remain reliable, compliant and valuable over time.

Understanding the Full Lifecycle

Every machine-learning (ML) project travels through several stages that often overlap and iterate. The lifecycle begins with clearly defining the problem, transitions into collecting and preparing data, moves on to model selection and training, and culminates in deploying models into production environments. However, the journey doesn’t end there: continuous monitoring, retraining and governance are essential to ensuring the model continues to deliver value.

A well-managed lifecycle provides many benefits:

- Predictable performance: Structured processes reduce ad-hoc experiments and inconsistent results.

- Reduced technical debt: Documentation and version control prevent models from becoming black boxes.

- Regulatory compliance: Governance mechanisms ensure that the model’s decisions are explainable and auditable.

- Operational efficiency: Automation and orchestration cut down deployment cycles and maintenance costs.

Expert Insights

- Holistic view: Experts emphasize that lifecycle management integrates data pipelines, model engineering and software integration, treating them as inseparable pieces of a product.

- Agile iterations: Leaders recommend iterative cycles: small experiments, quick feedback and regular adjustments.

- Compliance by design: Compliance isn’t an afterthought; incorporate ethical and legal considerations from the planning stage.

How Do You Plan and Define Your ML Project?

Quick Summary: Why is planning critical for ML success?

- Effective ML projects start with a clear problem definition, detailed objectives and agreed-upon success metrics. Without alignment on business goals, models may solve the wrong problem or produce outputs that aren’t actionable.

Laying a Strong Foundation

Before you touch code or data, ask why the model is needed. Collaboration with stakeholders is vital here:

- Identify stakeholders and their objectives. Understand who will use the model and how its outputs will influence decisions.

- Define success criteria. Set measurable key performance indicators (KPIs) such as accuracy, recall, ROI or customer satisfaction.

- Outline constraints and risks. Consider ethical boundaries, regulatory requirements and resource limitations.

- Translate business goals into ML tasks. Frame the problem in ML terms (classification, regression, recommendation) while documenting assumptions.

Creative Example: Predictive Maintenance in Manufacturing

Imagine a factory wants to reduce downtime by predicting machine failures. Stakeholders (plant managers, maintenance teams, data scientists) meet to define the goal: prevent unexpected breakdowns. They agree on success metrics like “reduce downtime by 30%” and set constraints such as “no additional sensors”. This clear planning ensures the subsequent data collection and modeling efforts are aligned.

Expert Insights

- Stakeholder interviews: Involve not just executives but also frontline operators; they often offer valuable context.

- Document assumptions: Record what you believe is true about the problem (e.g., data availability, label quality) so you can revisit it later.

- Alignment prevents scope creep: A defined scope keeps the team focused and prevents unnecessary features.

How to Engineer and Prepare Data for ML?

Quick Summary: What are the core steps in data engineering?

- Data engineering includes ingestion, exploration, validation, cleaning, labeling and splitting. These steps ensure that raw data becomes a reliable, structured dataset ready for modeling.

Data Ingestion & Integration

The first task is collecting data from various sources: databases, APIs, logs, sensors or third-party feeds. For large datasets, use distributed tools such as Spark for processing and HDFS for storage, and document where each piece of data comes from. Consider generating synthetic data if certain classes are rare.

Exploration & Validation

Once data is ingested, profile it to understand distributions and detect anomalies. Compute statistics like mean, variance and cardinality; build histograms and correlation matrices. Validate data with rules: check for missing values, out-of-range numbers or duplicate entries.
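
The three validation rules named above can be sketched as a small rule checker. This is a minimal illustration using only the standard library; the function name `validate_rows`, the column names and the allowed range are assumptions made for the example, not part of any particular tool.

```python
def validate_rows(rows, required, ranges, key):
    """Return {rule name: [indexes of offending rows]} for three basic rules."""
    issues = {"missing": [], "out_of_range": [], "duplicate": []}
    seen_keys = set()
    for i, row in enumerate(rows):
        # Rule 1: every required column must be present and not None.
        if any(row.get(col) is None for col in required):
            issues["missing"].append(i)
        # Rule 2: numeric columns must fall inside their allowed range.
        for col, (low, high) in ranges.items():
            value = row.get(col)
            if value is not None and not (low <= value <= high):
                issues["out_of_range"].append(i)
                break
        # Rule 3: the key column must be unique; later repeats are flagged.
        if row.get(key) in seen_keys:
            issues["duplicate"].append(i)
        seen_keys.add(row.get(key))
    return issues


# Hypothetical sensor readings used only to exercise the rules.
rows = [
    {"id": 1, "temp_c": 21.5},
    {"id": 2, "temp_c": None},   # missing value
    {"id": 3, "temp_c": 480.0},  # outside the assumed sensor range
    {"id": 1, "temp_c": 21.5},   # duplicate id
]
report = validate_rows(rows, required=["id", "temp_c"],
                       ranges={"temp_c": (-40, 125)}, key="id")
```

In a real pipeline the same rules would typically run on every ingestion batch, with the report logged so that rejected rows can be traced later.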

Data Cleaning & Wrangling

Cleaning data involves fixing errors, imputing missing values and standardizing formats. Techniques range from simple (mean imputation) to advanced (time-aware imputation for sequences). Standardize categorical values (e.g., unify “USA,” “United States,” “U.S.”) to avoid fragmentation.
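
As a rough sketch of the two simplest techniques mentioned here, the snippet below performs mean imputation and maps country spellings to one canonical value. The alias table and function names are illustrative assumptions; a real project would maintain a fuller mapping.

```python
from statistics import mean

# Assumed alias table for the example; extend it for your own data.
COUNTRY_ALIASES = {"usa": "US", "united states": "US", "u.s.": "US"}


def normalize_country(value):
    """Map known spellings to one canonical code; leave unknown values as-is."""
    cleaned = value.strip()
    return COUNTRY_ALIASES.get(cleaned.lower(), cleaned)


def impute_mean(values):
    """Replace None with the mean of the observed values."""
    observed = [v for v in values if v is not None]
    fill = mean(observed)  # raises StatisticsError if nothing was observed
    return [fill if v is None else v for v in values]
```

Mean imputation ignores ordering, so for sequences a time-aware method (such as carrying the last observation forward) is usually the better fit.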

Labeling & Splitting

Label each data point with the correct outcome, a task often requiring human expertise. Use annotation tools or Clarifai’s AI Lake to streamline labeling. After labeling, split the dataset into training, validation and test sets. Use stratified sampling to preserve class distributions.
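
To make stratified splitting concrete, here is a minimal standard-library sketch that splits indexes class by class so each subset keeps roughly the original label proportions. The 70/15/15 fractions and the function name are assumptions for the example.

```python
import random
from collections import defaultdict


def stratified_split(labels, fractions=(0.7, 0.15, 0.15), seed=42):
    """Return (train, val, test) index lists with per-class proportions kept."""
    rng = random.Random(seed)  # fixed seed so the split is repeatable
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    train, val, test = [], [], []
    for indexes in by_class.values():
        rng.shuffle(indexes)
        n_train = round(len(indexes) * fractions[0])
        n_val = round(len(indexes) * fractions[1])
        train += indexes[:n_train]
        val += indexes[n_train:n_train + n_val]
        test += indexes[n_train + n_val:]  # remainder goes to the test set
    return train, val, test
```

With 80 "ok" and 20 "fail" labels, each subset keeps the 4:1 ratio, which a plain random split does not guarantee for rare classes.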

Expert Insights

- Data quality > Model complexity: A simple algorithm on clean data often outperforms a complex algorithm on messy data.

- Iterative approach: Data engineering is rarely one-and-done. Plan for multiple passes as you discover new issues.

- Documentation matters: Track every transformation; regulators may require lineage logs for auditing.

How to Perform EDA and Feature Engineering?

Quick Summary: Why do you need EDA and feature engineering?

- Exploratory data analysis (EDA) uncovers patterns and anomalies that guide model design, while feature engineering transforms raw data into meaningful inputs.

Exploratory Data Analysis (EDA)

Start by visualizing distributions using histograms, scatter plots and box plots. Look for skewness, outliers and relationships between variables. Uncover patterns like seasonality or clusters; identify potential data leakage or mislabeled records. Generate hypotheses: for example, “Does weather affect customer demand?”

Feature Engineering & Selection

Feature engineering is the art of creating new variables that capture underlying signals. Common techniques include:

- Combining variables (e.g., ratio of clicks to impressions).

- Transforming variables (log, square root, exponential).

- Encoding categorical values (one-hot encoding, target encoding).

- Aggregating over time (rolling averages, time since last purchase).

After generating features, select the most informative ones using statistical tests, tree-based feature importance or L1 regularization.
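
The four feature-engineering techniques listed above can each be shown in a few lines. The sketch below uses plain Python so it runs anywhere; the function names are illustrative, and in practice a dataframe library would usually do this work.

```python
import math


def click_through_rate(clicks, impressions):
    """Combine two variables into a ratio; guard against division by zero."""
    return clicks / impressions if impressions else 0.0


def log_transform(value):
    """log(1 + x) compresses long-tailed, non-negative values."""
    return math.log1p(value)


def one_hot(value, categories):
    """Encode a categorical value as a 0/1 vector over known categories."""
    return [1 if value == c else 0 for c in categories]


def rolling_mean(values, window):
    """Trailing average over up to `window` most recent points."""
    out = []
    for i in range(len(values)):
        chunk = values[max(0, i - window + 1): i + 1]
        out.append(sum(chunk) / len(chunk))
    return out
```

Note that the rolling mean only looks backwards in time, which keeps the feature free of future information.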

Creative Example: Feature Engineering in Finance

Consider a credit-scoring model. Beyond income and credit history, engineers create a “credit utilization ratio”, capturing the percentage of credit in use relative to the limit. They also compute “time since last delinquent payment” and “number of inquiries in the past six months.” These engineered features often have stronger predictive power than raw variables.

Expert Insights

- Domain expertise pays dividends: Collaborate with subject-matter experts to craft features that capture domain nuances.

- Less is more: A smaller set of high-quality features often outperforms a large but noisy set.

- Beware of leakage: Don’t use future information (e.g., last payment outcome) when training your model.

How to Develop, Experiment and Train ML Models?

Quick Summary: What are the key steps in model development?

- Model development involves selecting algorithms, training them iteratively, evaluating performance and tuning hyperparameters. Packaging models into portable formats (e.g., ONNX) facilitates deployment.

Selecting Algorithms

Choose models that fit your data type and problem:

- Structured data: Logistic regression, decision trees, gradient boosting.

- Sequential data: Recurrent neural networks, transformers.

- Images and video: Convolutional neural networks (CNNs).

Start with simple models to establish baselines, then progress to more complex architectures if needed.

Training & Hyperparameter Tuning

Training involves feeding labeled data into your model and optimizing a loss function via algorithms like gradient descent. Use cross-validation to detect overfitting and to compare different hyperparameter settings. Tools like Optuna or Hyperopt automate the search across hyperparameters.

Evaluation & Tuning

Evaluate models using appropriate metrics:

- Classification: Accuracy, precision, recall, F1 score, AUC.

- Regression: Mean Absolute Error (MAE), Root Mean Squared Error (RMSE).

Tune hyperparameters iteratively: adjust learning rates, regularization parameters or architecture depth until performance plateaus.
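
For reference, several of the metrics named above reduce to short formulas. The following is a minimal sketch of precision, recall, F1, MAE and RMSE written from their standard definitions; production code would normally rely on an established metrics library instead.

```python
import math


def precision_recall_f1(y_true, y_pred, positive=1):
    """Binary precision, recall and F1 for the given positive label."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == positive and p == positive for t, p in pairs)
    fp = sum(t != positive and p == positive for t, p in pairs)
    fn = sum(t == positive and p != positive for t, p in pairs)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def mae(y_true, y_pred):
    """Mean Absolute Error: average size of the errors."""
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)


def rmse(y_true, y_pred):
    """Root Mean Squared Error: penalizes large errors more than MAE."""
    squared = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    return math.sqrt(squared / len(y_true))
```

Comparing MAE and RMSE on the same predictions is a quick way to see whether a few large errors dominate: the further RMSE sits above MAE, the more uneven the errors are.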

Packaging for Deployment

Once trained, export your model to a standardized format like ONNX or PMML. Version the model and its metadata (training data, hyperparameters) to ensure reproducibility.

Expert Insights

- No free lunch: Complex models can overfit; always benchmark against simpler baselines.

- Fairness & bias: Evaluate your model across demographic groups and implement mitigation if needed.

- Experiment tracking: Use tools like Clarifai’s built-in tracking or MLflow to log hyperparameters, metrics and artifacts.

How to Deploy and Serve Your Model?

Quick Summary: What are the best practices for deployment?

- Deployment transforms a trained model into an operational service. Choose the right serving pattern (batch, real-time or streaming) and leverage containerization and orchestration tools to ensure scalability and reliability.

Deployment Strategies

- Batch inference: Suitable for offline analytics; run predictions on a schedule and write results to storage.

- Real-time inference: Deploy models as microservices accessible via REST/gRPC APIs to provide immediate predictions.

- Streaming inference: Process continuous data streams (e.g., Kafka topics) and update models frequently.

Infrastructure & Orchestration

Package your model in a container (Docker) and deploy it on a platform like Kubernetes. Implement autoscaling to handle varying loads and ensure resilience. For serverless deployments, consider cold-start latency.

Testing & Rollbacks

Before going live, perform integration tests to ensure the model works within the larger application. Use blue/green deployment or canary release strategies to roll out updates incrementally and roll back if issues arise.

Expert Insights

- Model performance monitoring: Even after deployment, performance may vary due to changing data; see the monitoring section next.

- Infrastructure as code: Use Terraform or CloudFormation to define your deployment environment, ensuring consistency across stages.

- Clarifai’s edge: Deploy models using Clarifai’s compute orchestration platform to manage resources across cloud, on-prem and edge.

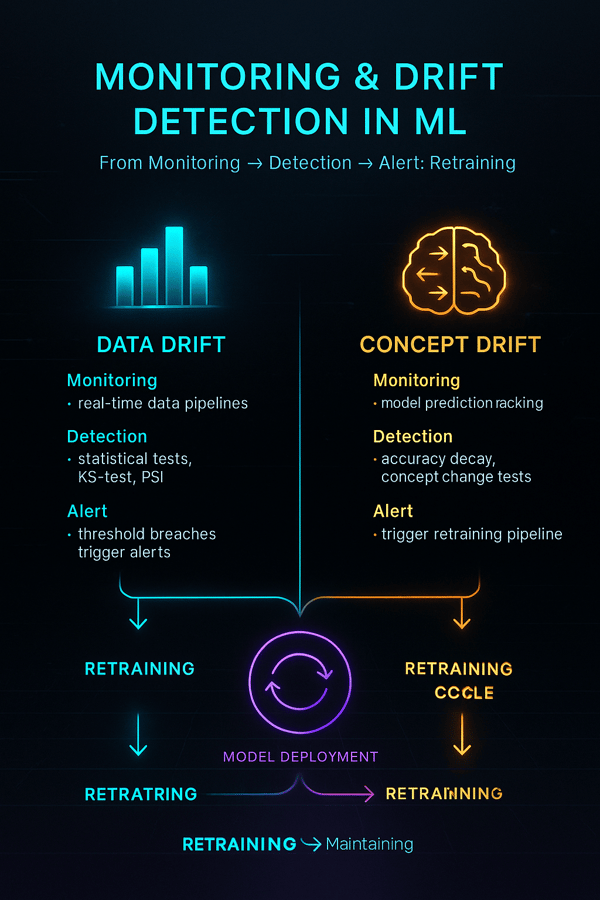

How to Monitor Models and Manage Drift?

Quick Summary: Why is monitoring essential?

- Models degrade over time due to data drift, concept drift and changes in the environment. Continuous monitoring tracks performance, detects drift and triggers retraining.

Monitoring Metrics

- Functional performance: Track metrics like accuracy, precision, recall or MAE on real-world data.

- Operational performance: Monitor latency, throughput and resource utilization.

- Drift detection: Measure differences between the training data distribution and incoming data. Tools like Evidently AI and NannyML excel at detecting general drift and pinpointing drift timing, respectively.
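
One common way to measure the difference between a training distribution and incoming data is the Population Stability Index (PSI). The sketch below is an illustrative standard-library version, not the method used by Evidently AI or NannyML; the bin count and the small `eps` floor are assumptions chosen for the example.

```python
import math


def population_stability_index(expected, actual, bins=10, eps=1e-6):
    """PSI between a reference sample and a new sample of one numeric feature."""
    low, high = min(expected), max(expected)
    width = (high - low) / bins or 1.0  # avoid zero width for constant data

    def bin_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - low) / width)
            counts[min(max(idx, 0), bins - 1)] += 1  # clamp outliers to edge bins
        # Floor each share at eps so the logarithm below is always defined.
        return [max(c / len(values), eps) for c in counts]

    e, a = bin_shares(expected), bin_shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))
```

Identical samples give a PSI of zero, and the value grows as the distributions diverge. A frequently quoted rule of thumb treats values above roughly 0.25 as a significant shift, but the right cut-off depends on the feature and should be tuned on your own data.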

Alerting & Retraining

Set thresholds for metrics; trigger alerts and remedial actions when thresholds are breached. Automate retraining pipelines so the model adapts to new data patterns.
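
A threshold check of this kind can be very small. The sketch below is one possible shape, with hypothetical metric names (`accuracy`, `p95_latency_ms`, `psi`) and limits chosen only for illustration; the retraining decision is deliberately separated from alerting so that, for instance, a latency alert does not trigger a retrain.

```python
from dataclasses import dataclass


@dataclass
class Threshold:
    metric: str
    limit: float
    higher_is_better: bool


def breached(thresholds, metrics):
    """Return the names of metrics that crossed their threshold."""
    alerts = []
    for t in thresholds:
        value = metrics[t.metric]
        crossed = value < t.limit if t.higher_is_better else value > t.limit
        if crossed:
            alerts.append(t.metric)
    return alerts


def should_retrain(alerts, retrain_on=("accuracy", "psi")):
    """Retrain only for model-quality alerts, not operational ones."""
    return any(name in retrain_on for name in alerts)


# Hypothetical limits for the example.
THRESHOLDS = [
    Threshold("accuracy", 0.90, higher_is_better=True),
    Threshold("p95_latency_ms", 250, higher_is_better=False),
    Threshold("psi", 0.25, higher_is_better=False),
]
```

In practice the `metrics` dictionary would come from the monitoring system, and a positive `should_retrain` result would enqueue a pipeline run rather than retrain inline.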

Creative Example: E-commerce Demand Forecasting

A retailer’s demand-forecasting model suffers a drop in accuracy after a major marketing campaign. Monitoring picks up the data drift and triggers retraining with post-campaign data. This timely retraining prevents stockouts and overstock issues, saving millions.

Expert Insights

- Amazon’s lesson: During the COVID-19 pandemic, Amazon’s supply-chain models failed due to unexpected demand spikes, a cautionary tale on the importance of drift detection.

- Comprehensive monitoring: Track both input distributions and prediction outputs for a complete picture.

- Clarifai’s dashboard: Clarifai’s Model Performance Dashboard visualizes drift, performance degradation and fairness metrics.

Why Do Model Governance and Risk Management Matter?

Quick Summary: What is model governance?

- Model governance ensures that models are transparent, accountable and compliant. It encompasses processes that control access, document lineage and align models with legal requirements.

Governance & Compliance

Model governance integrates with MLOps by covering six stages: business understanding, data engineering, model engineering, quality assurance, deployment and monitoring. It enforces access control, documentation and auditing to meet regulatory requirements.

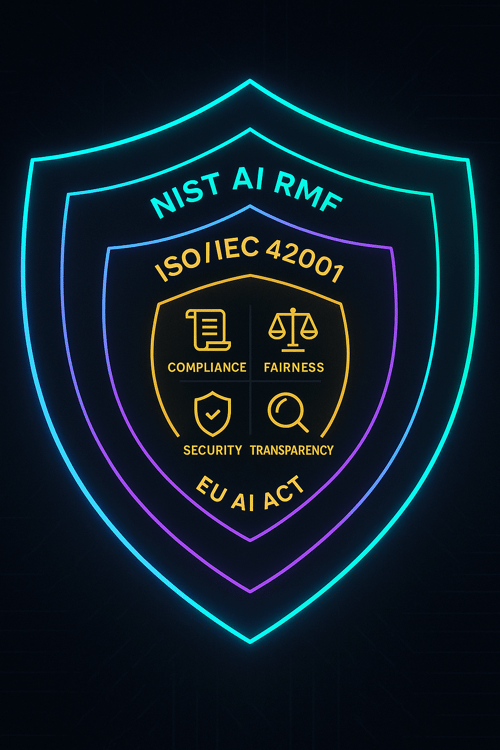

Regulatory Frameworks

- EU AI Act: Classifies AI systems into risk categories. High-risk systems must satisfy strict documentation, transparency and human oversight requirements.

- NIST AI RMF: Suggests functions (Govern, Map, Measure, Manage) that organizations should perform throughout the AI lifecycle.

- ISO/IEC 42001: A standard, published in 2023, that specifies requirements for an AI management system.

Implementing Governance

Establish roles and responsibilities, separate model developers from validators, and create an AI board involving legal, technical and ethics experts. Document training data sources, feature selection, model assumptions and evaluation results.

Expert Insights

- Comprehensive records: Keeping detailed records of model decisions and interactions helps in investigations and audits.

- Ethical AI: Governance is not just about compliance; it ensures that AI systems align with organizational values and social expectations.

- Clarifai’s tools: Clarifai’s Control Center offers granular permission controls and SOC2/ISO 27001 compliance out of the box, easing governance burdens.

How to Ensure Reproducibility and Track Experiments?

Quick Summary: Why is reproducibility important?

- Reproducibility ensures that models can be consistently rebuilt and audited. Experiment tracking centralizes metrics and artifacts for comparison and collaboration.

Version Control & Data Lineage

Use Git for code and DVC (Data Version Control) or Git LFS for large datasets. Log random seeds, environment variables and library versions to avoid non-deterministic results. Keep transformation scripts under version control.
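
As an illustration of what logging seeds and library versions can look like, the sketch below builds a small run manifest with the standard library. The manifest fields and function names are assumptions for the example; it seeds only Python’s `random` module, so NumPy or deep-learning frameworks would need their own seeding calls.

```python
import json
import platform
import random
import sys
from importlib import metadata


def build_run_manifest(seed, packages):
    """Seed the stdlib RNG and record the environment for later audits."""
    random.seed(seed)
    versions = {}
    for name in packages:
        try:
            versions[name] = metadata.version(name)
        except metadata.PackageNotFoundError:
            versions[name] = None  # record absence instead of failing the run
    return {
        "seed": seed,
        "python": sys.version.split()[0],
        "platform": platform.platform(),
        "packages": versions,
    }


def manifest_to_json(manifest):
    """Serialize with sorted keys so manifests diff cleanly in Git."""
    return json.dumps(manifest, indent=2, sort_keys=True)
```

Storing the resulting JSON next to the trained artifact, under version control, gives a later reader enough information to rebuild a comparable environment.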

Experiment Tracking

Tools like MLflow, Neptune.ai or Clarifai’s built-in tracker let you log hyperparameters, metrics, artifacts and environment details, and tag experiments for easy retrieval. Use dashboards to compare runs and decide which models to promote.

Model Registry

A model registry is a centralized store for models and their metadata. It tracks versions, performance, stage (staging, production), and references to data and code. Unlike object storage, a registry provides context and supports rollbacks.
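
To show what a registry adds over plain object storage, here is a toy in-memory sketch with versions, stages and rollback. It is not the API of MLflow, Clarifai or any other product; the class names, stage labels and artifact URIs are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class ModelVersion:
    version: int
    artifact_uri: str
    metrics: dict
    stage: str = "staging"


@dataclass
class ModelRegistry:
    versions: list = field(default_factory=list)
    history: list = field(default_factory=list)  # promoted versions, oldest first

    def register(self, artifact_uri, metrics):
        entry = ModelVersion(len(self.versions) + 1, artifact_uri, metrics)
        self.versions.append(entry)
        return entry

    def promote(self, version):
        """Move one version to production and archive the previous one."""
        for entry in self.versions:
            if entry.stage == "production":
                entry.stage = "archived"
        self.versions[version - 1].stage = "production"
        self.history.append(version)

    def rollback(self):
        """Restore the previously promoted version (needs two promotions)."""
        self.history.pop()             # discard the current production version
        previous = self.history.pop()  # promote() appends it again
        self.promote(previous)
        return previous

    def production(self):
        return next(e for e in self.versions if e.stage == "production")
```

The promotion history is what makes rollback a one-line operation; a bucket of model files carries no such record of which version was live when.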

Expert Insights

- Reproducibility is non-negotiable for regulated industries; auditors may request to reproduce a prediction made years ago.

- Tags and naming conventions: Use consistent naming patterns for experiments to avoid confusion.

- Clarifai’s advantage: Clarifai’s platform integrates experiment tracking and model registry, so models move seamlessly from development to deployment.

How to Automate Your ML Lifecycle?

Quick Summary: What role does automation play in MLOps?

- Automation streamlines repetitive tasks, accelerates releases and reduces human error. CI/CD pipelines, continuous training and infrastructure-as-code are key mechanisms.

CI/CD for Machine Learning

Adopt continuous integration and delivery pipelines:

- Continuous integration: Automate code tests, data validation and static analysis on every commit.

- Continuous delivery: Automate deployment of models to staging environments.

- Continuous training: Trigger training jobs automatically when new data arrives or drift is detected.

Infrastructure‑as‑Code & Orchestration

Define infrastructure (compute, networking, storage) using Terraform or CloudFormation to ensure consistent and repeatable environments. Use Kubernetes to orchestrate containers and implement autoscaling.

Clarifai Integration

Clarifai’s compute orchestration simplifies automation: you can deploy your models anywhere (cloud, on-prem or edge) and scale them automatically. Local runners let you test or run models offline using the same API, making CI/CD pipelines more robust.

Expert Insights

- Automate tests: ML pipelines need tests beyond unit tests; include checks for data schema and distribution.

- Small increments: Deploying small changes more frequently reduces risk.

- Self-healing pipelines: Build pipelines that react to drift detection by automatically retraining and redeploying.

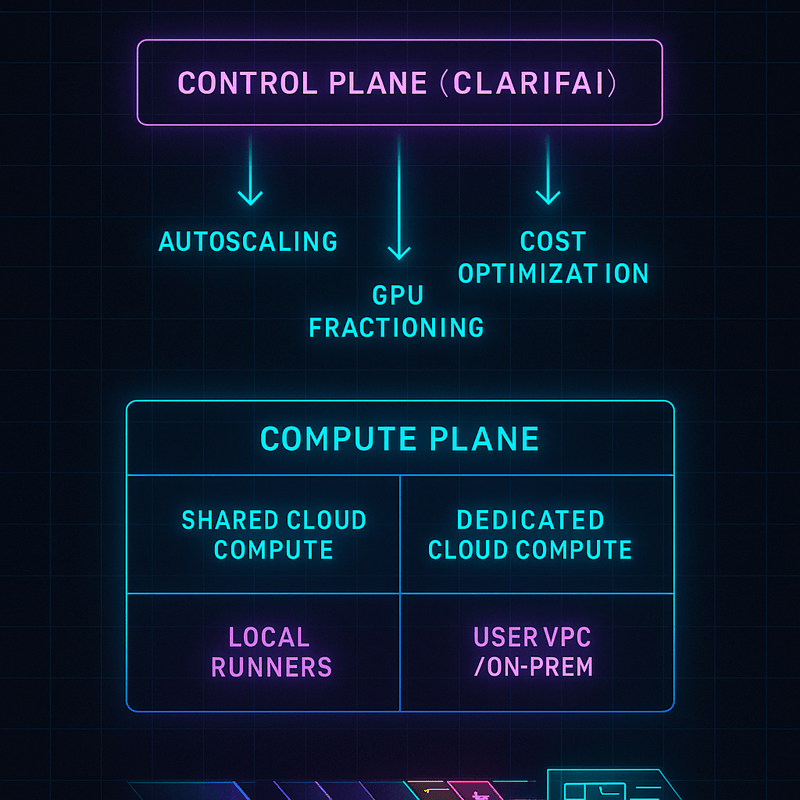

How to Orchestrate Compute Resources Effectively?

Quick Summary: What is compute orchestration and why is it important?

- Compute orchestration manages the allocation and scaling of hardware resources (CPU, GPU, memory) across different environments (cloud, on-prem, edge). It optimizes cost, performance and reliability.

Hybrid Deployment Options

Organizations can choose from:

- Shared cloud: Pay-as-you-go compute resources managed by providers.

- Dedicated cloud: Dedicated environments for predictable performance.

- On-premise: For data sovereignty or latency requirements.

- Edge: For real-time inference near data sources.

Clarifai’s Hybrid Platform

Clarifai’s platform offers a unified control plane where you can orchestrate workloads across shared compute, dedicated environments and your own VPC or edge hardware. Autoscaling and cost optimization features help right-size compute and allocate resources dynamically.

Cost Optimization Strategies

- Right-size instances: Choose instance types matching workload demands.

- Spot instances: Reduce costs by using spare capacity at discounted rates.

- Scheduling: Run compute-intensive tasks during off-peak hours to save on electricity and cloud fees.

Expert Insights

- Resource monitoring: Continuously monitor resource utilization to avoid idle capacity.

- MIG (Multi-Instance GPU): Partition GPUs to run multiple models concurrently, improving utilization.

- Clarifai’s local runners keep compute local to reduce latency and cloud costs.

How to Deploy Models on the Edge and On-Device?

Quick Summary: What are edge deployments and when are they useful?

- Edge deployments run models on devices close to where data is generated, reducing latency and preserving privacy. They’re ideal for IoT, mobile and remote environments.

Why Edge?

Edge inference avoids round-trip latency to the cloud and ensures models continue to operate even when connectivity is intermittent. It also keeps sensitive data local, which may be crucial for regulated industries.

Tools and Frameworks

- TensorFlow Lite, ONNX Runtime and Core ML enable models to run on mobile phones and embedded devices.

- Hardware acceleration: Devices like NVIDIA Jetson or smartphone NPUs provide the processing power needed for inference.

- Resilient updates: Use over-the-air updates with rollback to ensure reliability.

Clarifai’s Edge Solutions

Clarifai’s local runners deliver consistent APIs across cloud and edge and can run on devices like Jetson. They allow you to test locally and deploy seamlessly with minimal code changes.

Expert Insights

- Model size matters: Compress models via quantization or pruning to fit on resource-constrained devices.

- Data capture: Collect telemetry from edge devices to improve models over time.

- Connectivity planning: Implement caching and asynchronous syncing to handle network outages.
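
To give a feel for the quantization mentioned above, the sketch below applies symmetric 8-bit quantization to a list of weights: each float is stored as an integer code plus one shared scale factor. This is a simplified illustration of the idea, not how TensorFlow Lite, ONNX Runtime or Core ML implement it.

```python
def quantize_symmetric_int8(weights):
    """Map floats to integer codes in [-127, 127] using one shared scale."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 or 1.0  # fall back to 1.0 if all weights are zero
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Recover approximate float weights from the integer codes."""
    return [c * scale for c in codes]
```

Each weight then needs one byte instead of four (for 32-bit floats), at the cost of a rounding error of at most about half a quantization step per weight.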

What Is LLMOps and How to Handle Generative AI?

Quick Summary: How is LLMOps different from MLOps?

- LLMOps applies lifecycle management to large language models (LLMs) and generative AI, addressing unique challenges like prompt management, privacy and hallucination detection.

The Rise of Generative AI

Large language models (LLMs) like the GPT family and Claude can generate text, code and even images. Managing these models requires specialized practices:

- Model selection: Evaluate open models and choose one that fits your domain.

- Customization: Fine-tune or prompt-engineer the model for your specific task.

- Data privacy: Use pseudonymization or anonymization to protect sensitive data.

- Retrieval-Augmented Generation (RAG): Combine LLMs with vector databases to fetch accurate facts while keeping proprietary data out of the model’s training corpus.
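
The retrieval half of RAG can be illustrated without any model at all. In the sketch below, a bag-of-words counter stands in for a real embedding model and a sorted list stands in for a vector database; both are simplifying assumptions, and the prompt wording is hypothetical. No LLM is called.

```python
import math
from collections import Counter


def embed(text):
    """Toy stand-in for an embedding model: lowercase word counts."""
    return Counter(text.lower().split())


def cosine(a, b):
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[token] * b[token] for token in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def retrieve(query, documents, k=1):
    """Return the k documents most similar to the query."""
    q = embed(query)
    ranked = sorted(documents, key=lambda d: cosine(q, embed(d)), reverse=True)
    return ranked[:k]


def build_prompt(query, documents, k=1):
    """Place retrieved text in the prompt so the model answers from it."""
    context = "\n".join(retrieve(query, documents, k))
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"
```

Because the proprietary documents are supplied at query time, they never need to enter the model’s training data, which is the privacy property the RAG bullet describes.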

Prompt Management & Evaluation

- Prompt repositories: Store and version prompts just like code.

- Guardrails: Monitor outputs for hallucinations, toxicity or bias. Use tools like Clarifai’s generative AI evaluation service to measure and mitigate issues.
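
Versioning prompts like code can start as simply as storing each template under a content hash. The class below is a hypothetical in-memory sketch; in a real setup the templates would more likely live in Git or a database, and the 12-character digest length is an arbitrary choice.

```python
import hashlib


class PromptRepository:
    """Keeps every distinct version of each named prompt template."""

    def __init__(self):
        self._versions = {}  # name -> list of (digest, template), oldest first

    def commit(self, name, template):
        """Store the template if it changed; return its content digest."""
        digest = hashlib.sha256(template.encode("utf-8")).hexdigest()[:12]
        history = self._versions.setdefault(name, [])
        if not history or history[-1][0] != digest:
            history.append((digest, template))
        return digest

    def get(self, name, version=-1):
        """Fetch a template by position; -1 means the latest version."""
        return self._versions[name][version][1]

    def render(self, name, version=-1, **variables):
        """Fill the template placeholders with the given variables."""
        return self.get(name, version).format(**variables)
```

Logging the digest alongside each model response makes it possible to trace a problematic output back to the exact prompt text that produced it.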

Clarifai’s Generative AI Offering

Clarifai provides pre-trained text and image generation models with APIs for easy integration. Its platform allows you to fine-tune prompts and evaluate generative output with built-in guardrails.

Expert Insights

- LLMs can be unpredictable: Always test prompts across diverse inputs.

- Ethical considerations: LLMs can produce harmful or biased content; implement filters and oversight mechanisms.

- LLM cost: Generative models require substantial compute. Using Clarifai’s hybrid compute orchestration helps you manage costs while leveraging the latest models.

Why Is Collaboration Essential for MLOps?

Quick Summary: How do teams collaborate in MLOps?

- MLOps is inherently cross-functional, requiring cooperation between data scientists, ML engineers, operations teams, product owners and domain experts. Effective collaboration hinges on communication, shared tools and mutual understanding.

Building Cross-Functional Teams

- Roles & Responsibilities: Define roles clearly (data engineer, ML engineer, MLOps engineer, domain expert).

- Shared Documentation: Maintain documentation of datasets, feature definitions and model assumptions in collaborative platforms (Confluence, Notion).

- Communication Rituals: Conduct daily stand-ups, weekly syncs and retrospectives to align objectives.

Early Involvement of Domain Experts

Domain experts should be part of planning, feature engineering and evaluation phases to catch errors and add context. Encourage them to review model outputs and highlight anomalies.

Expert Insights

- Psychological safety: Foster an environment where team members can question assumptions without fear.

- Training: Encourage cross-training: engineers learn domain context; domain experts gain ML literacy.

- Clarifai’s Community: Clarifai offers community forums and support channels to help teams collaborate and get expert help.

What Do Real-World Case Studies Teach Us?

Quick Summary: What lessons come from real deployments?

- Real-world case studies reveal the importance of monitoring, edge deployment and preparedness for drift. They highlight how Clarifai’s platform accelerates success.

Ride-Sharing: Handling Weather-Driven Drift

A ride-sharing company monitored travel-time predictions using Clarifai’s dashboard. When heavy rain caused unusual travel patterns, drift detection flagged the change. An automated retraining job updated the model with the new data, preventing inaccurate ETAs and maintaining user trust.

Manufacturing: Edge Monitoring of Machines

A factory deployed a computer-vision model to detect equipment anomalies. Using Clarifai’s local runner on Jetson devices, they achieved real-time inference without sending video to the cloud. Night-time updates ensured the model stayed current without disrupting production.

Supply Chain: Consequences of Ignoring Drift

During COVID-19, Amazon’s supply-chain prediction algorithms failed due to unprecedented demand spikes for household goods, leading to bottlenecks. The lesson: incorporate extreme scenarios into risk management and monitor for unexpected drifts.

Benchmarking Drift Detection Tools

Researchers evaluated open-source drift tools and found Evidently AI best for general drift detection and NannyML for pinpointing drift timing. Choosing the right tool depends on your use case.

Expert Insights

- Monitoring pays off: Early detection and retraining saved the ride-sharing and manufacturing companies from costly errors.

- Edge vs cloud: Edge deployments cut latency but require strong update mechanisms.

- Tool selection: Evaluate tools for functionality, scalability, and integration ease.

What Future Trends Will Shape ML Lifecycle Management?

Quick Summary: Which trends should you watch?

- Responsible AI frameworks (NIST AI RMF, EU AI Act) and standards (ISO/IEC 42001) will shape governance, while LLMOps, federated learning, and AutoML will transform development.

Responsible AI & Regulation

The NIST AI RMF encourages organizations to govern, map, measure and manage AI risks. The EU AI Act categorizes systems by risk and requires high-risk systems to pass conformity assessments. ISO/IEC 42001, published in 2023, standardizes AI management systems.

LLMOps & Generative AI

As generative models proliferate, LLMOps will become essential. Expect new tools for prompt management, fairness auditing and generative content identification.

Federated Learning & Privacy

Federated learning will enable collaborative training across multiple devices without sharing raw data, boosting privacy and helping with regulatory compliance. Differential privacy and secure aggregation will further protect sensitive information.

Low-Code/AutoML & Citizen Data Scientists

AutoML platforms will democratize model development, enabling non-experts to build models. However, organizations must balance automation with governance and oversight.

Research Gaps & Opportunities

A systematic mapping study highlights that few research papers tackle deployment, maintenance and quality assurance. This gap offers opportunities for innovation in MLOps tooling and methodology.

Expert Insights

- Stay adaptable: Regulations will evolve; build flexible governance and compliance processes.

- Invest in education: Equip your team with knowledge of ethics, law and emerging technologies.

- Clarifai’s roadmap: Clarifai continues to integrate emerging practices (e.g., RAG, generative AI guardrails) into its platform, making it easier to adopt future trends.

Conclusion: How to Get Started and Succeed

Managing the ML lifecycle is a marathon, not a sprint. By planning carefully, preparing data meticulously, experimenting responsibly, deploying robustly, monitoring continuously and governing ethically, you set the stage for long-term success. Clarifai’s hybrid AI platform offers tools for orchestration, local execution, model registry, generative AI and fairness auditing, making it easier to adopt best practices and accelerate time to value.

Actionable Next Steps

- Audit your workflow: Identify gaps in version control, data quality or monitoring.

- Implement data pipelines: Automate ingestion, validation and cleaning.

- Track experiments: Use an experiment tracker and model registry.

- Automate CI/CD: Build pipelines that test, train and deploy models continuously.

- Monitor & retrain: Set up drift detection and automated retraining triggers.

- Prepare for compliance: Document data sources, features and evaluation metrics; adopt frameworks like NIST AI RMF.

- Explore Clarifai: Leverage Clarifai’s compute orchestration, local runners and generative AI tools to simplify infrastructure and accelerate innovation.

Frequently Asked Questions

Q1: How frequently should models be retrained?

Retraining frequency depends on data drift and business requirements. Use monitoring to detect when performance drops below acceptable thresholds and trigger retraining.

Q2: What differentiates MLOps from LLMOps?

MLOps manages any machine-learning model’s lifecycle, while LLMOps focuses on large language models, adding challenges like prompt management, privacy preservation and hallucination detection.

Q3: Are edge deployments always better?

No. Edge deployments reduce latency and improve privacy, but they require lightweight models and robust update mechanisms. Use them when latency, bandwidth or privacy demands outweigh the complexity.

Q4: How do model registries improve reproducibility?

Model registries store versions, metadata and deployment status, making it easy to roll back or compare models; object storage alone lacks this context.

Q5: What does Clarifai offer beyond open-source tools?

Clarifai provides end-to-end solutions, including compute orchestration, local runners, experiment tracking, generative AI tools and fairness audits, combined with enterprise-grade security and support.