Introduction

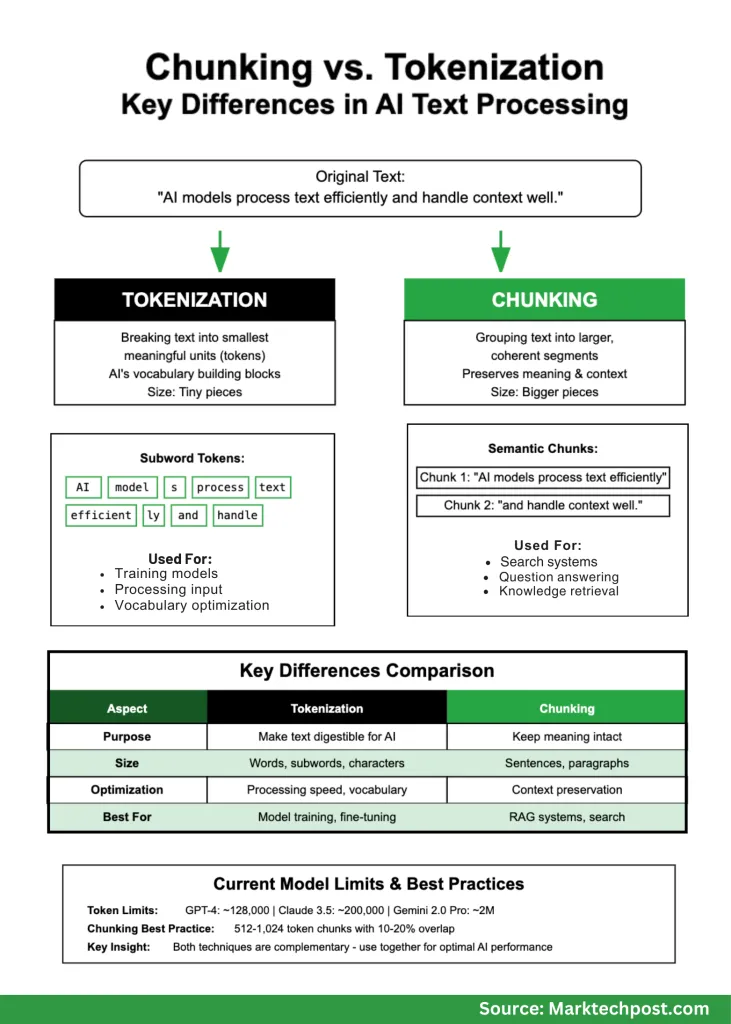

When you’re working with AI and natural language processing, you’ll quickly encounter two fundamental concepts that often get confused: tokenization and chunking. While both involve breaking text down into smaller pieces, they serve completely different purposes and work at different scales. If you’re building AI applications, understanding these differences isn’t just academic; it’s crucial for creating systems that actually work well.

Think of it this way: if you’re making a sandwich, tokenization is like cutting your ingredients into bite-sized pieces, while chunking is like organizing those pieces into logical groups that make sense to eat together. Both are necessary, but they solve different problems.

What’s Tokenization?

Tokenization is the process of breaking text into the smallest meaningful units that AI models can understand. These units, called tokens, are the basic building blocks that language models work with, and each one maps to an integer ID in the model’s vocabulary. You can think of tokens as the “words” in an AI’s vocabulary, though they’re often smaller than actual words.

There are several ways to create tokens:

Word-level tokenization splits text at spaces and punctuation. It’s simple, but it struggles with rare words the model has never seen before, which typically get mapped to a single “unknown” token.

Subword tokenization is more sophisticated and is the most widely used approach today. Methods like Byte Pair Encoding (BPE) and WordPiece, along with the SentencePiece toolkit (which implements BPE and unigram tokenization), break words into smaller pieces based on how frequently character combinations appear in the training data. This approach handles new or rare words much better.

Character-level tokenization treats each character as a token. It’s simple, but it produces very long sequences that are harder for models to process efficiently.

Here’s a practical example:

- Original text: “AI models process text efficiently.”
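Here is a minimal Python sketch of the word-level and character-level approaches that uses only the standard library. The function names and the tiny vocabulary are hypothetical and exist only for illustration. Real word-level tokenizers use more elaborate rules for contractions, hyphens, and numbers.

```python
import re

def word_tokenize(text: str) -> list[str]:
    # Runs of letters/digits become tokens; each punctuation mark is its own token.
    return re.findall(r"\w+|[^\w\s]", text)

def char_tokenize(text: str) -> list[str]:
    # Every character, including spaces, becomes a separate token.
    return list(text)

def map_to_vocab(tokens: list[str], vocab: set[str], unk: str = "[UNK]") -> list[str]:
    # A word-level model must replace any word missing from its vocabulary.
    return [t if t in vocab else unk for t in tokens]

sentence = "AI models process text efficiently."
words = word_tokenize(sentence)
chars = char_tokenize(sentence)
print(words)  # ['AI', 'models', 'process', 'text', 'efficiently', '.']
print(len(words), "word tokens vs", len(chars), "character tokens")  # 6 ... 35

# Hypothetical tiny vocabulary that happens to lack "efficiently".
vocab = {"AI", "models", "process", "text", "."}
print(map_to_vocab(words, vocab))  # ['AI', 'models', 'process', 'text', '[UNK]', '.']
```

The same sentence produces 6 word tokens but 35 character tokens. Any word outside the word-level vocabulary loses all of its information when it is replaced by `[UNK]`.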

- Phrase tokens: [“AI”, “models”, “process”, “text”, “efficiently”]

- Subword tokens: [“AI”, “model”, “s”, “process”, “text”, “efficient”, “ly”]

Notice how subword tokenization splits “models” into “model” and “s”, because this pattern appears frequently in training data. This helps the model understand related words like “modeling” or “modeled” even if it hasn’t seen them before. The exact splits depend on each tokenizer’s learned vocabulary.
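Subword splits like the one above come from a learned vocabulary. The sketch below is a toy version of BPE training that starts from a small, hypothetical word-frequency table. It repeatedly merges the most frequent adjacent pair of symbols. Several details that real implementations include are deliberately left out: end-of-word markers, byte-level fallback, and pre-tokenization.

```python
from collections import Counter

def merge_pair(symbols: tuple[str, ...], pair: tuple[str, str]) -> tuple[str, ...]:
    # Replace every adjacent occurrence of `pair` with one merged symbol.
    out, i = [], 0
    while i < len(symbols):
        if i + 1 < len(symbols) and (symbols[i], symbols[i + 1]) == pair:
            out.append(symbols[i] + symbols[i + 1])
            i += 2
        else:
            out.append(symbols[i])
            i += 1
    return tuple(out)

def learn_bpe(word_counts: dict[str, int], num_merges: int) -> list[tuple[str, str]]:
    # Start with every word split into single characters.
    corpus = {tuple(word): count for word, count in word_counts.items()}
    merges = []
    for _ in range(num_merges):
        # Count adjacent symbol pairs, weighted by how often each word occurs.
        pairs = Counter()
        for symbols, count in corpus.items():
            for pair in zip(symbols, symbols[1:]):
                pairs[pair] += count
        if not pairs:
            break
        best = max(pairs, key=pairs.get)  # on ties, the first pair seen wins
        merges.append(best)
        new_corpus = Counter()
        for symbols, count in corpus.items():
            new_corpus[merge_pair(symbols, best)] += count
        corpus = new_corpus
    return merges

def apply_bpe(word: str, merges: list[tuple[str, str]]) -> list[str]:
    # Replay the learned merges, in order, on a (possibly unseen) word.
    symbols = tuple(word)
    for pair in merges:
        symbols = merge_pair(symbols, pair)
    return list(symbols)

# Hypothetical word frequencies from a tiny training corpus.
counts = {"model": 10, "models": 6, "modeled": 3, "modeling": 4}
merges = learn_bpe(counts, num_merges=4)
print(merges)
print(apply_bpe("models", merges))
print(apply_bpe("remodeled", merges))
```

With these counts, four merges build “model” up from its letters. As a result, “models” becomes [“model”, “s”], and even the unseen word “remodeled” reuses the learned “model” piece.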

What’s Chunking?

Chunking takes a completely different approach. Instead of breaking text into tiny pieces, it groups text into larger, coherent segments that preserve meaning and context. When you’re building applications like chatbots or search systems, you need these larger chunks to maintain the flow of ideas.

Think about reading a research paper. You wouldn’t want every sentence scattered randomly; you’d want related sentences grouped together so the ideas make sense. That’s exactly what chunking does for AI systems.

Here’s how it works in practice:

- Original text: “AI models process text efficiently. They rely on tokens to capture meaning and context. Chunking enables better retrieval.”

- Chunk 1: “AI models process text efficiently.”

- Chunk 2: “They rely on tokens to capture meaning and context.”

- Chunk 3: “Chunking enables better retrieval.”
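A short regex-based splitter reproduces the one-sentence-per-chunk example. This minimal sketch assumes that every sentence ends in ., ! or ? followed by whitespace. Abbreviations such as “e.g.” would break that assumption, which is why production pipelines usually rely on a proper sentence segmenter.

```python
import re

def sentence_chunks(text: str) -> list[str]:
    # Split after ".", "!" or "?" whenever whitespace follows; one sentence per chunk.
    return [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]

text = ("AI models process text efficiently. They rely on tokens to capture "
        "meaning and context. Chunking enables better retrieval.")
for i, chunk in enumerate(sentence_chunks(text), start=1):
    print(f"Chunk {i}: {chunk}")
```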

Modern chunking strategies have become quite sophisticated:

Fixed-length chunking creates chunks of a specific size (like 500 words or 1,000 characters). It’s predictable, but it often breaks up related ideas awkwardly.

Semantic chunking is smarter: it looks for natural breakpoints where topics change. It typically compares embeddings of neighboring sentences to detect where ideas shift from one concept to another.

Recursive chunking works hierarchically. It first tries to split at paragraph breaks, then at sentences, and then at smaller units if needed.

Sliding window chunking creates overlapping chunks so that important context isn’t lost at boundaries.
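The sketch below implements fixed-length, sliding window, and recursive chunking as three short functions. These are simplified illustrations with two stated assumptions. First, sizes are measured in characters or words rather than model tokens. Second, the recursive splitter's three levels (paragraph, sentence, word) and its regexes were chosen for this example and are not a standard.

```python
import re

def fixed_chunks(text: str, size: int) -> list[str]:
    # Fixed-length: cut every `size` characters, ignoring word boundaries.
    return [text[i:i + size] for i in range(0, len(text), size)]

def sliding_window_chunks(words: list[str], size: int, overlap: int) -> list[list[str]]:
    # Each window starts `size - overlap` words after the previous one,
    # so consecutive chunks share `overlap` words.
    if not 0 <= overlap < size:
        raise ValueError("overlap must satisfy 0 <= overlap < size")
    chunks = []
    for start in range(0, len(words), size - overlap):
        chunks.append(words[start:start + size])
        if start + size >= len(words):
            break  # this window already reaches the end of the text
    return chunks

# Coarsest to finest split levels: (split regex, string used to re-join pieces).
LEVELS = [(r"\n\s*\n", "\n\n"), (r"(?<=[.!?])\s+", " "), (r"\s+", " ")]

def recursive_chunks(text: str, max_chars: int, levels=LEVELS) -> list[str]:
    # Recursive: split at the coarsest level, greedily re-pack pieces up to
    # max_chars, and descend a level only for pieces that are still too long.
    if len(text) <= max_chars:
        return [text]
    if not levels:
        return fixed_chunks(text, max_chars)  # last resort: hard cut
    (pattern, joiner), finer = levels[0], levels[1:]
    chunks, current = [], ""
    for piece in re.split(pattern, text):
        if not piece:
            continue
        candidate = current + joiner + piece if current else piece
        if len(candidate) <= max_chars:
            current = candidate
            continue
        if current:
            chunks.append(current)
            current = ""
        if len(piece) <= max_chars:
            current = piece
        else:
            chunks.extend(recursive_chunks(piece, max_chars, finer))
    if current:
        chunks.append(current)
    return chunks

doc = ("First paragraph is short.\n\n"
       "Second paragraph has two sentences. This one is the second.")
print(recursive_chunks(doc, max_chars=40))
print(sliding_window_chunks("one two three four five six seven".split(), size=4, overlap=1))
```

The recursive splitter descends to a finer level only for pieces that still exceed the limit. In the example, the short first paragraph stays whole, and only the longer second paragraph is broken into sentences.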

The Key Variations That Matter

Understanding when to use each approach makes all the difference in your AI applications:

| Aspect | Tokenization | Chunking |
|---|---|---|
| Size | Tiny pieces (words, parts of words, characters) | Larger pieces (sentences, paragraphs) |
| Goal | Make text digestible for AI models | Keep meaning intact for people and AI |
| When You Use It | Training models, processing input | Search systems, question answering |
| What You Optimize For | Processing speed, vocabulary size | Context preservation, retrieval accuracy |

Why This Matters for Real Applications

For AI Model Performance

When you’re working with language models, tokenization directly affects how much you pay and how fast your system runs. API providers bill models like GPT-4 per token, so text that encodes into fewer tokens costs less and is processed faster. Every model also has a context window, which is the maximum number of tokens it can handle in a single request. Current models have different limits:

- GPT-4 Turbo and GPT-4o: Around 128,000 tokens

- Claude 3.5: Up to 200,000 tokens

- Gemini 2.0 Pro: Up to 2 million tokens
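Checking the token budget before sending a request can catch prompts that are too large. The sketch below uses the limits listed above, with a few assumptions:

- The dictionary keys are informal labels, not official model IDs.
- The ratio of 4 characters per token is only a rough rule of thumb for English text.
- The price parameter is a placeholder for your provider's published rates.

Exact counts require the model's own tokenizer.

```python
import math

# Context-window sizes from the list above. Keys are informal labels, not API model IDs.
CONTEXT_LIMITS = {
    "gpt-4-turbo": 128_000,
    "claude-3.5": 200_000,
    "gemini-2.0-pro": 2_000_000,
}

def estimate_tokens(text: str, chars_per_token: float = 4.0) -> int:
    # Rough rule of thumb for English prose; use the model's tokenizer for exact counts.
    return math.ceil(len(text) / chars_per_token)

def fits_context(prompt_tokens: int, max_output_tokens: int, model: str) -> bool:
    # Assumes the prompt and the generated reply share one context window.
    return prompt_tokens + max_output_tokens <= CONTEXT_LIMITS[model]

def estimate_cost(tokens: int, usd_per_million_tokens: float) -> float:
    # Placeholder flat rate; real price lists usually differ for input and output tokens.
    return tokens / 1_000_000 * usd_per_million_tokens

prompt = "Summarize the attached contract. " * 5_000
n = estimate_tokens(prompt)
print(n, fits_context(n, max_output_tokens=4_000, model="gpt-4-turbo"))
print(f"${estimate_cost(n, usd_per_million_tokens=10.0):.2f} at a hypothetical $10 per million tokens")
```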

Recent research suggests that larger models actually work better with larger vocabularies. For example, LLaMA-2 70B uses a vocabulary of about 32,000 tokens, but scaling estimates suggest it would likely perform better with around 216,000. This matters because vocabulary size affects both performance and efficiency. A bigger vocabulary encodes text in fewer tokens, but every extra entry adds parameters to the model’s embedding layer.
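The efficiency side of vocabulary size is easy to quantify. Each vocabulary entry needs its own embedding vector, so the input embedding matrix has (vocabulary size × hidden size) parameters. The calculation below compares the two vocabulary sizes mentioned above, assuming LLaMA-2 70B's hidden size of 8,192:

```python
def embedding_params(vocab_size: int, hidden_size: int) -> int:
    # One learned vector of length `hidden_size` per vocabulary entry.
    return vocab_size * hidden_size

HIDDEN_SIZE = 8_192  # LLaMA-2 70B's hidden (model) dimension

for vocab_size in (32_000, 216_000):
    params = embedding_params(vocab_size, HIDDEN_SIZE)
    print(f"{vocab_size:,} tokens -> {params:,} input-embedding parameters")
```

The input embeddings alone go from roughly 262 million to about 1.77 billion parameters. A model whose output projection is not tied to its input embeddings carries a second matrix of the same size. The payoff is that a larger vocabulary encodes the same text in fewer tokens.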

For Search and Question-Answering Systems

Chunking strategy can make or break a RAG (Retrieval-Augmented Generation) system, where relevant chunks are retrieved from a document store and passed to the model as context. If your chunks are too small, you lose context. If they are too big, you overwhelm the model with irrelevant information. Get it right, and your system provides accurate, helpful answers. Get it wrong, and you get hallucinations and poor results.

Teams building enterprise AI systems have found that good chunking strategies significantly reduce those frustrating cases where the AI makes up facts or gives nonsensical answers.
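To see why chunk boundaries matter, here is a toy retrieval step. It scores each chunk by simple term overlap with the query and places the top chunks into a prompt. The term-overlap score is an assumption standing in for the embedding similarity or BM25 scoring that real RAG systems typically use. The function names are hypothetical.

```python
import re
from collections import Counter

def terms(text: str) -> Counter:
    # Lowercased word counts; a crude stand-in for an embedding.
    return Counter(re.findall(r"\w+", text.lower()))

def retrieve(query: str, chunks: list[str], k: int = 2) -> list[str]:
    # Score each chunk by how many query terms it shares; keep the top k with score > 0.
    q = terms(query)
    scored = []
    for idx, chunk in enumerate(chunks):
        c = terms(chunk)
        score = sum(min(count, c[t]) for t, count in q.items())
        scored.append((score, -idx, chunk))  # -idx: earlier chunks win ties
    scored.sort(reverse=True)
    return [chunk for score, _, chunk in scored[:k] if score > 0]

def build_prompt(query: str, retrieved: list[str]) -> str:
    # Retrieved chunks become the context the model is asked to answer from.
    context = "\n\n".join(retrieved)
    return f"Answer using only this context:\n{context}\n\nQuestion: {query}"

chunks = [
    "AI models process text efficiently.",
    "They rely on tokens to capture meaning and context.",
    "Chunking enables better retrieval.",
]
question = "How does chunking help retrieval?"
print(build_prompt(question, retrieve(question, chunks)))
```

If a fact is split across two chunks, neither chunk may score well on its own. If a chunk is very large, it matches many queries but drags irrelevant text into the prompt.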

Where You’ll Use Each Approach

Tokenization Is Essential For:

Training new models – You can’t train a language model without first tokenizing your training data. The tokenization strategy shapes how well the model learns, from vocabulary coverage to sequence length.

Fine-tuning existing models – When you adapt a pre-trained model to your specific domain (like medical or legal text), you need to consider carefully whether the existing tokenization suits your specialized vocabulary.

Cross-language applications – Subword tokenization is especially valuable when you work with languages that have complex word structures or build multilingual systems.
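One way to check whether an existing tokenizer suits a specialized vocabulary is to look at how it splits domain terms. The sketch below implements greedy longest-match-first subword lookup in the style of WordPiece, where continuation pieces carry a “##” prefix. Both vocabularies are tiny hypothetical examples and are not taken from any real model.

```python
def wordpiece_tokenize(word: str, vocab: set[str], unk: str = "[UNK]") -> list[str]:
    # Greedy longest-match-first: at each position take the longest vocabulary
    # entry that matches; pieces after the first carry a "##" prefix.
    pieces, start = [], 0
    while start < len(word):
        end, match = len(word), None
        while end > start:
            piece = word[start:end] if start == 0 else "##" + word[start:end]
            if piece in vocab:
                match = piece
                break
            end -= 1
        if match is None:
            return [unk]  # some span matched nothing: the whole word is unknown
        pieces.append(match)
        start = end
    return pieces

# Hypothetical vocabularies: a general one and the same one extended with a domain term.
general_vocab = {"electro", "##card", "##io", "##gram", "model", "##s"}
domain_vocab = general_vocab | {"electrocardiogram"}

for term in ["models", "electrocardiogram", "tachycardia"]:
    print(term, wordpiece_tokenize(term, general_vocab), wordpiece_tokenize(term, domain_vocab))
```

A term that breaks into many pieces or falls back to `[UNK]` suggests that the tokenizer may not suit your domain.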

Chunking Is Essential For:

Building company knowledge bases – When you want employees to ask questions and get accurate answers from your internal documents, proper chunking ensures the AI retrieves relevant, complete information.

Document analysis at scale – Whether you’re processing legal contracts, research papers, or customer feedback, chunking helps preserve document structure and meaning.

Search systems – Modern search goes beyond keyword matching. Semantic chunking helps systems understand what users actually want and retrieve the most relevant information.
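Semantic chunking can be sketched by comparing neighboring sentences and starting a new chunk wherever their similarity drops. This sketch uses bag-of-words cosine similarity in place of the sentence embeddings that real systems use. The 0.2 threshold is an arbitrary assumption that you would tune on your own data.

```python
import math
import re
from collections import Counter

def bow(sentence: str) -> Counter:
    # Bag-of-words vector: lowercased word counts (stand-in for a sentence embedding).
    return Counter(re.findall(r"\w+", sentence.lower()))

def cosine(a: Counter, b: Counter) -> float:
    dot = sum(count * b[t] for t, count in a.items())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0

def semantic_chunks(sentences: list[str], threshold: float = 0.2) -> list[list[str]]:
    # Start a new chunk wherever adjacent sentences look dissimilar (a likely topic shift).
    if not sentences:
        return []
    chunks = [[sentences[0]]]
    for prev, cur in zip(sentences, sentences[1:]):
        if cosine(bow(prev), bow(cur)) < threshold:
            chunks.append([cur])
        else:
            chunks[-1].append(cur)
    return chunks

sentences = [
    "Tokens are the units a model reads.",
    "A tokenizer splits text into tokens.",
    "Retrieval systems search stored chunks.",
    "Good chunks make retrieval systems accurate.",
]
for chunk in semantic_chunks(sentences):
    print(chunk)
```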

Current Best Practices (What Actually Works)

After observing many real-world implementations, here’s what tends to work:

For Chunking:

- Start with chunks of 512-1024 tokens for most applications

- Add 10-20% overlap between chunks to preserve context

- Use semantic boundaries when possible (ends of sentences, paragraphs)

- Test with your actual use cases and adjust based on the results

- Monitor for hallucinations and tweak your approach accordingly
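The first three guidelines above can be combined into one chunker. It packs whole sentences up to a token budget and carries a few trailing sentences into the next chunk as overlap. In this minimal sketch, `count_tokens` is a hypothetical placeholder that counts whitespace-separated words, so you should swap in your model's tokenizer for real budgets. A single sentence longer than the budget still becomes one oversized chunk.

```python
import re

def count_tokens(text: str) -> int:
    # Hypothetical placeholder: counts whitespace-separated words, not model tokens.
    return len(text.split())

def pack_sentences(text: str, max_tokens: int = 512, overlap_ratio: float = 0.15) -> list[str]:
    # Pack whole sentences into chunks of at most max_tokens, repeating trailing
    # sentences (up to overlap_ratio of the budget) at the start of the next chunk.
    sentences = [s for s in re.split(r"(?<=[.!?])\s+", text.strip()) if s]
    chunks, current = [], []
    for sent in sentences:
        if current and count_tokens(" ".join(current + [sent])) > max_tokens:
            chunks.append(" ".join(current))
            carry, carried = [], 0
            for prev in reversed(current):
                n = count_tokens(prev)
                if carried + n > overlap_ratio * max_tokens:
                    break
                carry.insert(0, prev)
                carried += n
            # Drop the overlap if it would push the next chunk over budget.
            current = carry if count_tokens(" ".join(carry + [sent])) <= max_tokens else []
        current.append(sent)
    if current:
        chunks.append(" ".join(current))
    return chunks

text = " ".join(f"Sentence number {i} is here." for i in range(1, 7))
for chunk in pack_sentences(text, max_tokens=12, overlap_ratio=0.5):
    print(chunk)
```

The defaults mirror the guidelines (512 tokens, 15% overlap). The tiny budget in the usage example just makes the overlap easy to see: each chunk begins with the last sentence of the previous one.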

For Tokenization:

- Use established methods (BPE, WordPiece, SentencePiece) rather than building your own

- Consider your domain: medical or legal text might need specialized approaches

- Monitor out-of-vocabulary rates (and, for subword tokenizers, average tokens per word) in production

- Balance compression (fewer tokens) against meaning preservation
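To monitor out-of-vocabulary rates and, for subword tokenizers, average tokens per word, you can run a sample of production text through the tokenizer and aggregate the results. The sketch below accepts any tokenize function as a parameter. The `[UNK]` token name and the toy lookup-table tokenizer in the usage example are illustrative assumptions.

```python
from typing import Callable

def tokenizer_stats(words: list[str], tokenize: Callable[[str], list[str]],
                    unk: str = "[UNK]") -> dict[str, float]:
    # oov_rate: share of words whose tokens include the unknown token.
    # tokens_per_word: average pieces per word; higher means more fragmentation and cost.
    if not words:
        return {"oov_rate": 0.0, "tokens_per_word": 0.0}
    token_lists = [tokenize(w) for w in words]
    oov = sum(1 for tokens in token_lists if unk in tokens)
    total = sum(len(tokens) for tokens in token_lists)
    return {"oov_rate": oov / len(words), "tokens_per_word": total / len(words)}

# Toy lookup table standing in for a real tokenizer (hypothetical splits).
TOY_SPLITS = {"the": ["the"], "patient": ["patient"], "cardiology": ["card", "##io", "##logy"]}

def toy_tokenize(word: str) -> list[str]:
    return TOY_SPLITS.get(word, ["[UNK]"])

sample = "the patient saw cardiology the tachycardia".split()
print(tokenizer_stats(sample, toy_tokenize))
```

Tracking both numbers over time shows when incoming text drifts away from what the tokenizer was built for.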

Abstract

Tokenization and chunking aren’t competing techniques; they’re complementary tools that solve different problems. Tokenization makes text digestible for AI models, while chunking preserves meaning for practical applications.

As AI systems become more sophisticated, both techniques keep evolving. Context windows are getting larger, vocabularies are becoming more efficient, and chunking strategies are getting smarter about preserving semantic meaning.

The key is understanding what you’re trying to accomplish. Building a chatbot? Focus on chunking strategies that preserve conversational context. Training a model? Optimize your tokenization for efficiency and coverage. Building an enterprise search system? You’ll need both: good tokenization for efficiency and intelligent chunking for accuracy.