# Introduction

Anyone who has spent a fair amount of time doing data science eventually learns one thing: the golden rule of downstream machine learning modeling, known as garbage in, garbage out (GIGO).

For example, feeding a linear regression model highly collinear data, or running ANOVA tests on groups with heteroscedastic (unequal) variances, is the perfect recipe... for useless models that won’t learn properly.

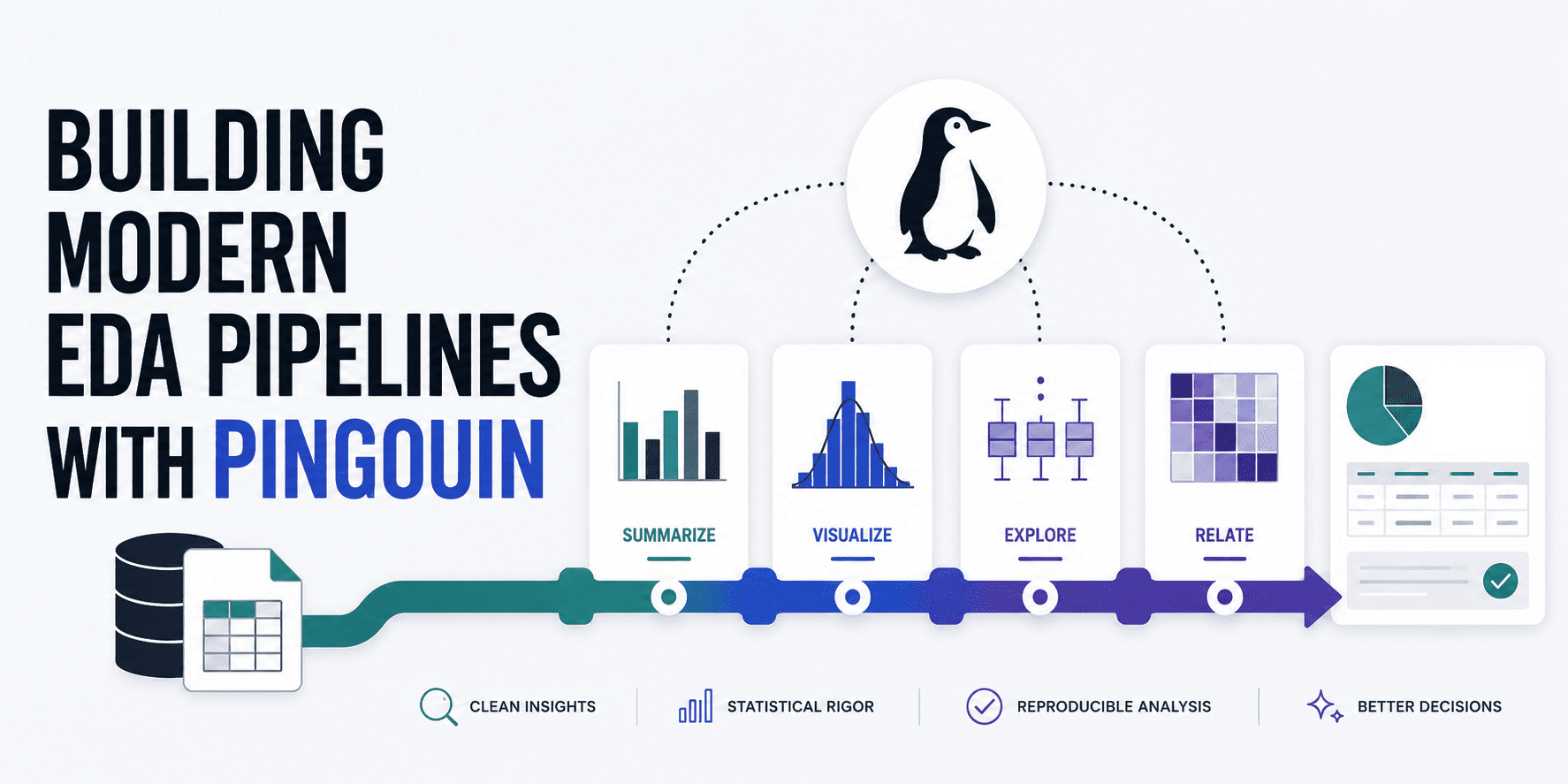

Exploratory data analysis (EDA) has a lot to say through visualizations like scatter plots and histograms, but these are not sufficient when we need rigorous validation of data against the mathematical assumptions required by downstream analyses or models. Pingouin helps with this by bridging the gap between two well-known libraries in data science and statistics: SciPy and pandas. It can also be a great ally for building robust, automated EDA pipelines. This article teaches you how to build a holistic pipeline for rigorous, statistical EDA that validates several important data properties.

# Preliminary Setup

Let’s start by making sure Pingouin is installed in our Python environment (and pandas, in case you don’t have it yet):

!pip install pingouin pandas

After that, it’s time to import these key libraries and load our data. As an example open dataset, we’ll use one containing samples of wine properties and their quality.

import pandas as pd

import pingouin as pg

# Loading the wine dataset from an open dataset GitHub repository

url = "https://raw.githubusercontent.com/gakudo-ai/open-datasets/refs/heads/main/wine-quality-white-and-red.csv"

df = pd.read_csv(url)

# Displaying the first few rows to understand our features

df.head()

# Checking Univariate Normality

The first of the specific exploratory analyses we’ll conduct is a check on univariate normality. Many traditional algorithms for training machine learning models (and statistical tests like ANOVAs and t-tests, for that matter) rely on the assumption that continuous variables follow a normal, a.k.a. Gaussian, distribution. Pingouin’s pg.normality() function performs this check, by default through a Shapiro-Wilk test applied to each column of the dataframe:

# Selecting a subset of continuous features for normality checks

features = ['fixed acidity', 'volatile acidity', 'citric acid', 'pH', 'alcohol']

# Running the normality test

normality_results = pg.normality(df[features])

print(normality_results)

Output:

| | W | pval | normal |
|---|---|---|---|
| fixed acidity | 0.879789 | 2.437973e-57 | False |
| volatile acidity | 0.875867 | 6.255995e-58 | False |
| citric acid | 0.964977 | 5.262332e-37 | False |
| pH | 0.991448 | 2.204049e-19 | False |
| alcohol | 0.953532 | 2.918847e-41 | False |

It looks like none of the numeric features at hand satisfy normality. This is by no means something wrong with the data; it’s simply part of its characteristics. Keep in mind, too, that with several thousand rows the Shapiro-Wilk test will flag even modest departures from normality. The message we’re getting is that, in later data preprocessing steps beyond our EDA, we might want to consider applying transformations like a log transform or Box-Cox that make the raw data look “more normal-like” and thus more suitable for models that assume normality.
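As a rough illustration of the transformations mentioned above, the sketch below applies Box-Cox to a synthetic, right-skewed sample (not the wine data) using SciPy, then compares skewness and the Shapiro-Wilk statistic before and after. The sample, the seed, and the variable names are assumptions made for this example. Box-Cox requires strictly positive values, so a column containing zeros would need a shift or a different transform first.

```python
import numpy as np
from scipy import stats

# Synthetic right-skewed sample standing in for a skewed feature (assumption)
rng = np.random.default_rng(0)
skewed = rng.lognormal(mean=0.0, sigma=0.8, size=500)

# Box-Cox needs strictly positive input; lambda is estimated by maximum likelihood
transformed, fitted_lambda = stats.boxcox(skewed)

# Compare skewness and the Shapiro-Wilk W statistic before and after
skew_before, skew_after = stats.skew(skewed), stats.skew(transformed)
w_before, _ = stats.shapiro(skewed)
w_after, _ = stats.shapiro(transformed)

print(f"lambda={fitted_lambda:.3f}")
print(f"skewness: {skew_before:.3f} -> {skew_after:.3f}")
print(f"Shapiro W: {w_before:.3f} -> {w_after:.3f}")
```

A W statistic closer to 1 and a skewness closer to 0 after the transform suggest the data has moved toward a normal shape, although a formal test may still reject normality on large samples.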

# Checking Multivariate Normality

Similarly, evaluating normality not feature by feature, but accounting for the interaction between features, is another interesting aspect to inspect. Let’s see how to check for multivariate normality: a key requirement in techniques like multivariate ANOVA (MANOVA), for instance.

# Henze-Zirkler multivariate normality test

multivariate_normality_results = pg.multivariate_normality(df[features])

print(multivariate_normality_results)

Output:

HZResults(hz=np.float64(23.72107048442373), pval=np.float64(0.0), normal=False)

And guess what: the result reports normal=False with a p-value of 0.0, which means multivariate normality doesn’t hold either. If you are going to train a machine learning model on this dataset, non-parametric, tree-based models like gradient boosting and random forests might be a more robust alternative than parametric, weight-based models like SVM, linear regression, and so on.

# Checking Homoscedasticity

Next comes a tricky word for a rather simple concept: homoscedasticity. In regression, it refers to equal or constant variance of the prediction errors; when comparing groups, as we do here, it means the dependent variable has equal variance in every group. It is commonly read as a measure of reliability. We will test this property (sorry, too hard to write its name again!) with Pingouin’s implementation of Levene’s test, as follows:

# Levene's test for equal variances across groups

# 'dv' is the target, dependent variable; 'group' is the categorical variable

homoscedasticity_results = pg.homoscedasticity(data=df, dv='alcohol', group='quality')

print(homoscedasticity_results)

Result:

| | W | pval | equal_var |
|---|---|---|---|
| levene | 66.338684 | 2.317649e-80 | False |

Since we got False once again, we have a so-called heteroscedasticity problem, which needs to be accounted for in downstream analyses. One possible approach is to use robust standard errors when fitting regression models.
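To see which groups are behind a failed Levene’s test, it can help to look at the per-group variances directly. The sketch below does this on synthetic data with hypothetical column names (group and value), not on the wine dataset, and calls SciPy’s levene function as a stand-in for the Pingouin call above.

```python
import numpy as np
import pandas as pd
from scipy import stats

rng = np.random.default_rng(42)

# Synthetic data: three groups with the same mean but different spread (assumption)
spreads = {"low": 0.5, "mid": 1.0, "high": 2.0}
frames = [
    pd.DataFrame({"group": name, "value": rng.normal(10.0, sd, size=200)})
    for name, sd in spreads.items()
]
toy = pd.concat(frames, ignore_index=True)

# Per-group sample size and variance show where the spread differs
group_summary = toy.groupby("group")["value"].agg(["count", "var"])
print(group_summary)

# Levene's test (median-centered) on the same groups
samples = [g["value"].to_numpy() for _, g in toy.groupby("group")]
levene_stat, levene_p = stats.levene(*samples, center="median")
print(f"Levene W={levene_stat:.2f}, p={levene_p:.3g}")
```

On real data, groups with very few samples can produce unstable variance estimates, so the count column is worth reading alongside the variances.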

# Checking Sphericity

Another statistical property to analyze is sphericity, which indicates whether the variances of the differences between all possible pairwise combinations of conditions are equal. Testing this property is often of interest before running principal component analysis (PCA) for dimensionality reduction, as it helps us understand whether there are correlations between variables. PCA would be rendered rather useless if there weren’t any:

# Mauchly's sphericity test

sphericity_results = pg.sphericity(df[features])

print(sphericity_results)

Result:

SpherResults(spher=False, W=np.float64(0.004437706589942777), chi2=np.float64(35184.26583883276), dof=9, pval=np.float64(0.0))

Looks like we have chosen a pretty tough, unforgiving dataset! But fear not: this article is intentionally designed to focus on the EDA process and help you identify plenty of data issues like these. At the end of the day, detecting them and knowing what to do about them before downstream machine learning analysis is far better than building a potentially flawed model. In this case, there is a catch: the p-value of 0.0 means the null hypothesis of sphericity is rejected. Strictly speaking, Mauchly’s test checks whether the variances of pairwise differences are equal; the null hypothesis of an identity correlation matrix belongs to the related Bartlett’s test of sphericity, which is the one classically run before PCA. The rejection here is therefore consistent with, but does not by itself prove, meaningful correlations between the variables. So if we had plenty of features and wanted to reduce dimensionality, applying PCA might still be a good idea, ideally after confirming those correlations directly.
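The null hypothesis of an identity correlation matrix mentioned above is the one tested by Bartlett’s test of sphericity. A minimal sketch of that test follows, computed from its textbook formula with NumPy and SciPy rather than through a Pingouin function; the helper name bartlett_sphericity and the synthetic data are assumptions made for illustration.

```python
import numpy as np
from scipy import stats

def bartlett_sphericity(data):
    """Bartlett's test of sphericity (hypothetical helper, textbook formula).

    H0: the correlation matrix of the columns is the identity matrix.
    """
    data = np.asarray(data, dtype=float)
    n_samples, n_vars = data.shape
    corr = np.corrcoef(data, rowvar=False)
    # slogdet is numerically safer than log(det(corr))
    _, log_det = np.linalg.slogdet(corr)
    chi2 = -(n_samples - 1 - (2 * n_vars + 5) / 6) * log_det
    dof = n_vars * (n_vars - 1) // 2
    pval = stats.chi2.sf(chi2, dof)
    return chi2, dof, pval

rng = np.random.default_rng(1)
base = rng.normal(size=(500, 1))
# Three columns sharing a common component, hence correlated (synthetic data)
correlated = base + 0.5 * rng.normal(size=(500, 3))
# Three independent columns
independent = rng.normal(size=(500, 3))

chi2_corr, dof_corr, p_corr = bartlett_sphericity(correlated)
chi2_ind, dof_ind, p_ind = bartlett_sphericity(independent)
print(f"correlated:  chi2={chi2_corr:.1f}, dof={dof_corr}, p={p_corr:.3g}")
print(f"independent: chi2={chi2_ind:.1f}, dof={dof_ind}, p={p_ind:.3g}")
```

A small p-value on the correlated columns indicates that their correlation matrix differs from the identity, which is the situation in which PCA has shared variance to compress.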

# Checking Multicollinearity

Last, we’ll check multicollinearity: a property that indicates whether there are highly correlated predictors. This can become an undesirable property in interpretable models like linear regressors. Let’s check it:

# Calculating a Pearson correlation matrix with significance stars

correlation_matrix = pg.rcorr(df[features], method='pearson')

print(correlation_matrix)

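Output matrix:

| | fixed acidity | volatile acidity | citric acid | pH | alcohol |
|---|---|---|---|---|---|
| fixed acidity | - | `***` | `***` | `***` | `***` |
| volatile acidity | 0.219 | - | `***` | `***` | `**` |
| citric acid | 0.324 | -0.378 | - | `***` | |
| pH | -0.253 | 0.261 | -0.33 | - | `***` |
| alcohol | -0.095 | -0.038 | -0.01 | 0.121 | - |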

While pandas’ corr() can also be used, Pingouin’s counterpart shows the correlation coefficients in the lower triangle and uses asterisks in the upper triangle to indicate the statistical significance level of each correlation (* for p < 0.05, ** for p < 0.01, and *** for p < 0.001). A correlation can be statistically significant yet still small in magnitude; multicollinearity becomes a concern when the absolute value of the correlation is high (typically above 0.8). In our case, none of the pairwise correlations are dangerously large, and all five evaluated features provide largely non-overlapping, unique information for further analyses.
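The 0.8 rule of thumb can be turned into a small automated check. The following sketch uses pandas’ corr() on synthetic data; the helper name high_correlation_pairs, the toy columns, and the default threshold are assumptions for illustration. Applied to the five wine features above, whose largest absolute correlation is 0.378, a check like this would be expected to return an empty list.

```python
import numpy as np
import pandas as pd

def high_correlation_pairs(frame, threshold=0.8):
    """Return (col_a, col_b, r) for pairs whose |Pearson r| exceeds threshold."""
    corr = frame.corr(method="pearson")
    cols = list(corr.columns)
    pairs = []
    # Visit each unordered pair once (upper triangle, excluding the diagonal)
    for i in range(len(cols)):
        for j in range(i + 1, len(cols)):
            r = corr.iloc[i, j]
            if abs(r) > threshold:
                pairs.append((cols[i], cols[j], round(float(r), 3)))
    return pairs

rng = np.random.default_rng(7)
a = rng.normal(size=500)
toy = pd.DataFrame({
    "a": a,
    "b": a + 0.1 * rng.normal(size=500),  # nearly a copy of "a"
    "c": rng.normal(size=500),            # unrelated to "a" and "b"
})

flagged = high_correlation_pairs(toy, threshold=0.8)
print(flagged)
```

Pairwise correlations only catch collinearity between two predictors at a time; a predictor that is a combination of several others can slip through, which is why variance inflation factors are often used as a complementary check.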

# Wrapping Up

Through a series of examples applied and explained one by one, we have seen how to unleash the potential of Pingouin, an open-source Python library, to build robust, modern EDA pipelines. These pipelines help you make better decisions in data preprocessing and in downstream analyses based on advanced statistical tests or machine learning models, so that you can choose the right actions to perform and the right models to use.
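One way to gather checks like these into a single reusable step is sketched below. To keep the example light on dependencies, it calls SciPy’s shapiro and levene and pandas’ corr() directly instead of the Pingouin functions used in this article; the function name assumption_report, its arguments, and the synthetic data are all hypothetical.

```python
import numpy as np
import pandas as pd
from scipy import stats

def assumption_report(frame, features, dv, group, alpha=0.05):
    """Collect a few assumption checks in one dictionary (hypothetical helper)."""
    # Shapiro-Wilk test per feature
    rows = []
    for col in features:
        w_stat, pval = stats.shapiro(frame[col].dropna())
        rows.append({"feature": col, "W": float(w_stat),
                     "pval": float(pval), "normal": bool(pval > alpha)})
    normality = pd.DataFrame(rows).set_index("feature")

    # Levene's test for equal variance of dv across the levels of group
    samples = [g[dv].dropna().to_numpy() for _, g in frame.groupby(group)]
    lev_stat, lev_p = stats.levene(*samples, center="median")

    # Largest absolute pairwise correlation among the features
    abs_corr = frame[features].corr().abs().to_numpy()
    off_diagonal = abs_corr[~np.eye(len(features), dtype=bool)]

    return {
        "normality": normality,
        "levene": {"W": float(lev_stat), "pval": float(lev_p),
                   "equal_var": bool(lev_p > alpha)},
        "max_abs_corr": float(off_diagonal.max()),
    }

# Synthetic data (assumption): x2 is clearly non-normal, x1's spread depends on label
rng = np.random.default_rng(3)
n = 300
toy = pd.DataFrame({
    "x1": rng.normal(size=n),
    "x2": rng.exponential(size=n),
    "label": rng.choice(["a", "b"], size=n),
})
toy["x1"] = np.where(toy["label"] == "a", toy["x1"], 3.0 * toy["x1"])

report = assumption_report(toy, features=["x1", "x2"], dv="x1", group="label")
print(report["normality"])
print(report["levene"])
print(report["max_abs_corr"])
```

Returning plain Python and pandas objects keeps the report easy to log or to assert on in an automated pipeline; swapping in the Pingouin calls shown earlier would follow the same structure.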

Iván Palomares Carrascosa is a leader, writer, speaker, and adviser in AI, machine learning, deep learning & LLMs. He trains and guides others in harnessing AI in the real world.