On this article, we’ll give attention to Gated Recurrent Models (GRUs)- a extra easy but highly effective various that’s gained traction for its effectivity and efficiency.

Whether or not you’re new to sequence modeling or trying to sharpen your understanding, this information will clarify how GRUs work, the place they shine, and why they matter in at this time’s deep studying panorama.

In deep studying, not all knowledge arrives in neat, impartial chunks. A lot of what we encounter: language, music, inventory costs, unfolds over time, with every second formed by what got here earlier than. That’s the place sequential knowledge is available in, and with it, the necessity for fashions that perceive context and reminiscence.

Recurrent Neural Networks (RNNs) had been constructed to sort out the problem of working with sequences, making it doable for machines to comply with patterns over time, like how individuals course of language or occasions.

Nonetheless, conventional RNNs are inclined to lose observe of older data, which may result in weaker predictions. That’s why newer fashions like LSTMs and GRUs got here into the image, designed to raised maintain on to related particulars throughout longer sequences.

What are GRUs?

Gated Recurrent Models, or GRUs, are a kind of neural community that helps computer systems make sense of sequences- issues like sentences, time sequence, and even music. Not like customary networks that deal with every enter individually, GRUs bear in mind what got here earlier than, which is essential when context issues.

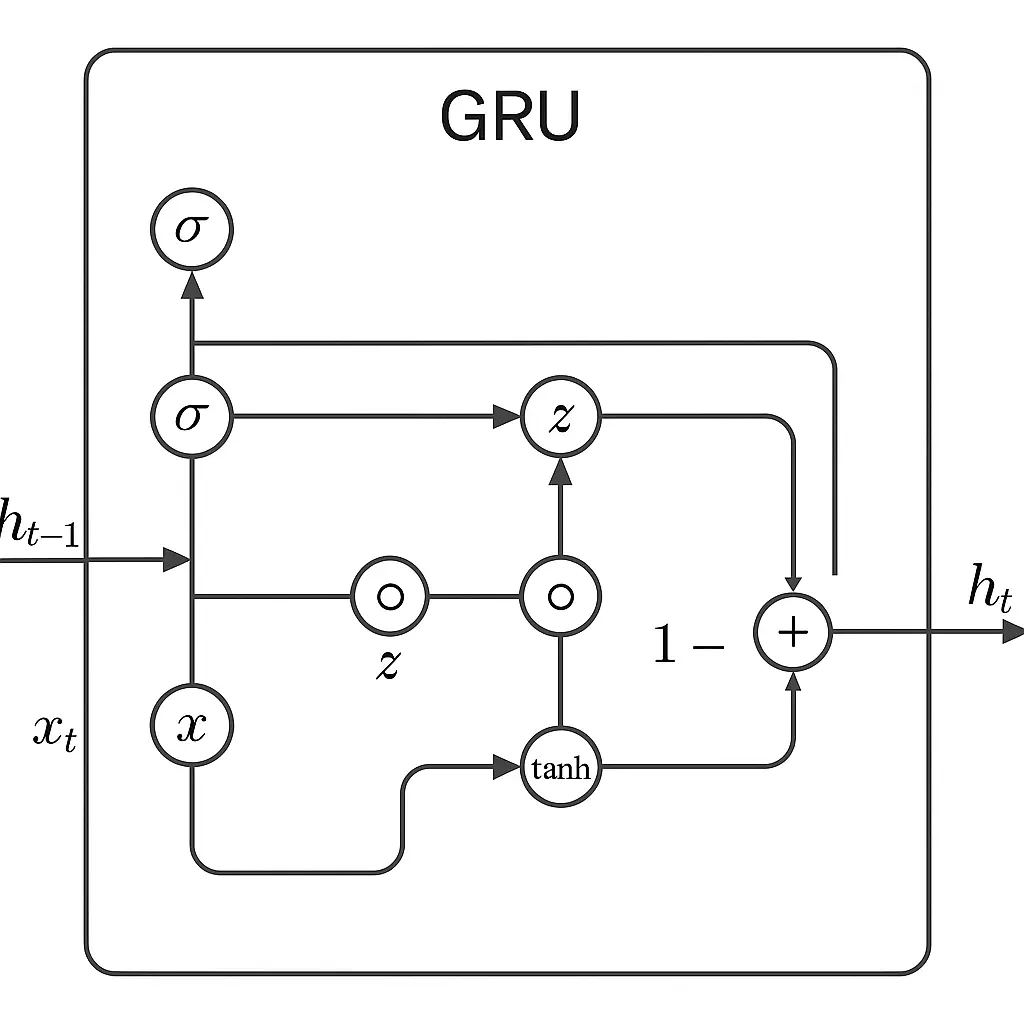

GRUs work by utilizing two foremost “gates” to handle data. The replace gate decides how a lot of the previous needs to be saved round, and the reset gate helps the mannequin work out how a lot of the previous to overlook when it sees new enter.

These gates enable the mannequin to give attention to what’s essential and ignore noise or irrelevant knowledge.

As new knowledge is available in, these gates work collectively to mix the previous and new well. If one thing from earlier within the sequence nonetheless issues, the GRU retains it. If it doesn’t, the GRU lets it go.

This steadiness helps it be taught patterns throughout time with out getting overwhelmed.

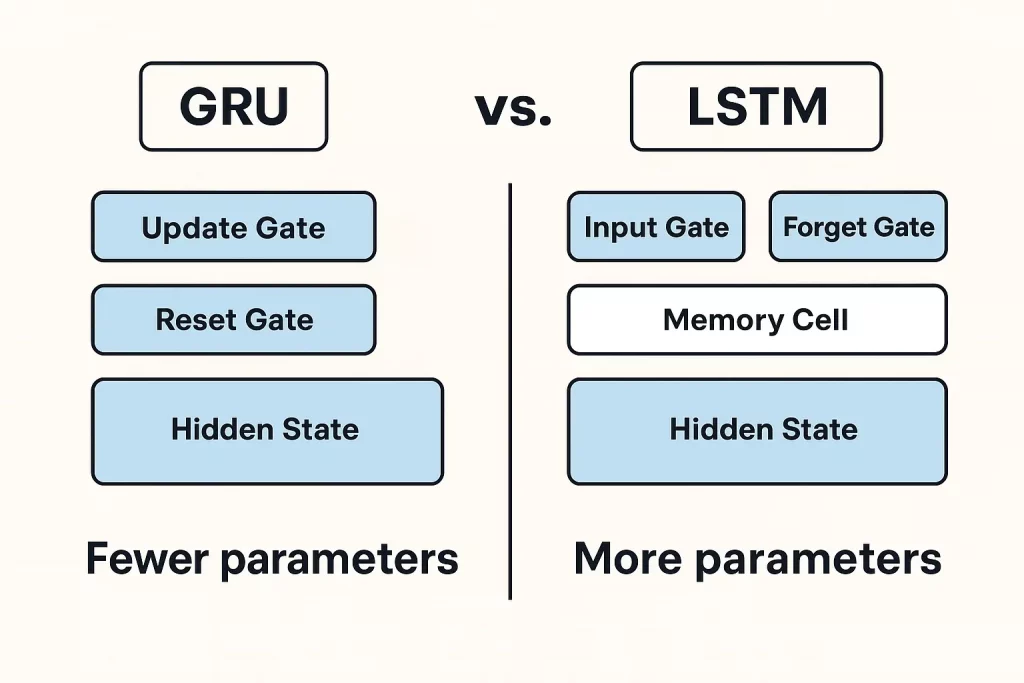

In comparison with LSTMs (Lengthy Quick-Time period Reminiscence), which use three gates and a extra complicated reminiscence construction, GRUs are lighter and sooner. They don’t want as many parameters and are normally faster to coach.

GRUs carry out simply as nicely in lots of instances, particularly when the dataset isn’t large or overly complicated. That makes them a stable alternative for a lot of deep studying duties involving sequences.

Total, GRUs provide a sensible mixture of energy and ease. They’re designed to seize important patterns in sequential knowledge with out overcomplicating issues, which is a top quality that makes them efficient and environment friendly in real-world use.

GRU Equations and Functioning

A GRU cell makes use of just a few key equations to resolve what data to maintain and what to discard because it strikes via a sequence. GRU blends previous and new data primarily based on what the gates resolve. This permits it to retain sensible context over lengthy sequences, serving to the mannequin perceive dependencies that stretch throughout time.

GRU Diagram

Benefits and Limitations of GRUs

Benefits

- GRUs have a status for being each easy and efficient.

- Considered one of their largest strengths is how they deal with reminiscence. They’re designed to carry on to the essential stuff from earlier in a sequence, which helps when working with knowledge that unfolds over time, like language, audio, or time sequence.

- GRUs use fewer parameters than a few of their counterparts, particularly LSTMs. With fewer shifting components, they prepare faster and want much less knowledge to get going. That is nice when brief on computing energy or working with smaller datasets.

- Additionally they are inclined to converge sooner. Which means the coaching course of normally takes much less time to succeed in a very good stage of accuracy. For those who’re in a setting the place quick iteration issues, this could be a actual profit.

Limitations

- In duties the place the enter sequence could be very lengthy or complicated, they could not carry out fairly in addition to LSTMs. LSTMs have an additional reminiscence unit that helps them cope with these deeper dependencies extra successfully.

- GRUs additionally battle with very lengthy sequences. Whereas they’re higher than easy RNNs, they will nonetheless lose observe of data earlier within the enter. That may be a problem in case your knowledge has dependencies unfold far aside, like the start and finish of an extended paragraph.

So, whereas GRUs hit a pleasant steadiness for a lot of jobs, they’re not a common repair. They shine in light-weight, environment friendly setups, however may fall brief when the duty calls for extra reminiscence or nuance.

Functions of GRUs in Actual-World Situations

Gated Recurrent Models (GRUs) are being extensively utilized in a number of real-world functions on account of their skill to course of sequential knowledge.

- In pure language processing (NLP), GRUs assist with duties like machine translation and sentiment evaluation.

- These capabilities are particularly related in sensible NLP initiatives like chatbots, textual content classification, or language era, the place the flexibility to grasp and reply to sequences meaningfully performs a central position.

- In time sequence forecasting, GRUs are particularly helpful for predicting tendencies. Suppose inventory costs, climate updates, or any knowledge that strikes in a timeline

- GRUs can decide up on the patterns and assist make good guesses about what’s coming subsequent.

- They’re designed to hold on to only the correct amount of previous data with out getting slowed down, which helps keep away from frequent coaching points.

- In voice recognition, GRUs assist flip spoken phrases into written ones. Since they deal with sequences nicely, they will regulate to completely different talking kinds and accents, making the output extra dependable.

- Within the medical world, GRUs are getting used to identify uncommon patterns in affected person knowledge, like detecting irregular heartbeats or predicting well being dangers. They’ll sift via time-based information and spotlight issues that docs may not catch instantly.

GRUs and LSTMs are designed to deal with sequential knowledge by overcoming points like vanishing gradients, however they every have their strengths relying on the scenario.

When to Select GRUs Over LSTMs or Different Fashions

Each GRUs and LSTMs are recurrent neural networks used for the processing of sequences, and are distinguished from one another by each complexity and computational metrics.

Their simplicity, that’s, the less parameters, makes GRUs prepare sooner and use much less computational energy. They’re due to this fact extensively utilized in use instances the place velocity overshadows dealing with massive, complicated recollections, e.g., on-line/stay analytics.

They’re routinely utilized in functions that demand quick processing, reminiscent of stay speech recognition or on-the-fly forecasting, the place fast operation and never a cumbersome evaluation of knowledge is important.

Quite the opposite, LSTMs help the functions that may be extremely dependent upon fine-grained reminiscence management, e.g. machine translation or sentiment evaluation. There are enter, overlook, and output gates current in LSTMs that improve their capability to course of long-term dependencies effectively.

Though requiring extra evaluation capability, LSTMs are usually most well-liked for addressing these duties that contain intensive sequences and sophisticated dependencies, with LSTMs being professional at such reminiscence processing.

Total, GRUs carry out greatest in conditions the place sequence dependencies are reasonable and velocity is a matter, whereas LSTMs are greatest for functions requiring detailed reminiscence and complicated long-term dependencies, although with a rise in computational calls for.

Way forward for GRU in Deep Studying

GRUs proceed to evolve as light-weight, environment friendly elements in fashionable deep studying pipelines. One main development is their integration with Transformer-based architectures, the place

GRUs are used to encode native temporal patterns or function environment friendly sequence modules in hybrid fashions, particularly in speech and time sequence duties.

GRU + Consideration is one other rising paradigm. By combining GRUs with consideration mechanisms, fashions acquire each sequential reminiscence and the flexibility to give attention to essential inputs.

These hybrids are extensively utilized in neural machine translation, time sequence forecasting, and anomaly detection.

On the deployment entrance, GRUs are perfect for edge units and cell platforms on account of their compact construction and quick inference. They’re already being utilized in functions like real-time speech recognition, wearable well being monitoring, and IoT analytics.

GRUs are additionally extra amenable to quantization and pruning, making them a stable alternative for TinyML and embedded AI.

Whereas GRUs might not change Transformers in large-scale NLP, they continue to be related in settings that demand low latency, fewer parameters, and on-device intelligence.

Conclusion

GRUs provide a sensible mixture of velocity and effectivity, making them helpful for duties like speech recognition and time sequence prediction, particularly when sources are tight.

LSTMs, whereas heavier, deal with long-term patterns higher and swimsuit extra complicated issues. Transformers are pushing boundaries in lots of areas however include greater computational prices. Every mannequin has its strengths relying on the duty.

Staying up to date on analysis and experimenting with completely different approaches, like combining RNNs and a focus mechanisms will help discover the precise match. Structured packages that mix principle with real-world knowledge science functions can present each readability and course.

Nice Studying’s PG Program in AI & Machine Studying is one such avenue that may strengthen your grasp of deep studying and its position in sequence modeling.